Large language models (LLMs) have come to dominate the natural language processing (NLP) field. Building an NLP system in the traditional way, however, still requires substantial effort. To address this issue, researchers and practitioners have started to explore how to prototype NLP systems rapidly via the “prompting” technique.

In a new paper, Prompt2Model: Generating Deployable Models from Natural Language Instructions, a research team from Carnegie Mellon University and Tsinghua University introduces Prompt2Model, a general-purpose approach that lets users specify system behavior through a prompt while producing a deployable special-purpose model that retains the advantages of such models.

The team summarizes that Prompt2Model can benefit the community by serving the following purposes:

- A tool for quickly building small and competent NLP systems: Prompt2Model can be used directly to produce task-specific models that can outperform LLMs, within a few hours and without any manual data annotation or architecture design.

- A testbed for end-to-end, prompt-based model training: Given its extensible design, Prompt2Model can offer a platform for exploring new techniques in model distillation, dataset generation, synthetic evaluation, dataset retrieval, and model retrieval.

The Prompt2Model framework seeks to automate the core machine learning development pipeline: data collection, model training, evaluation, and deployment. The framework first uses dataset retrieval and LLM-based dataset generation to obtain the labeled data the user's task needs. It then retrieves a pretrained model and fine-tunes it on the training split of the collected data. Finally, it evaluates the trained model on the test split and can optionally create a web UI for interacting with the model.

Furthermore, the researchers designed the framework to be modular and extensible, so users can enable or disable every module. Besides this customization, they also provide a reference implementation to enable immediate adoption.
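The staged, modular design described above can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: the stage names, the `run_pipeline` function, and the placeholder stage bodies are hypothetical and are not taken from the Prompt2Model codebase. It only shows how a pipeline of independent stages might be run in order, with any stage switched off.

```python
from typing import Any, Callable, Dict, FrozenSet, List, Tuple

# Hypothetical illustration only: none of these names come from Prompt2Model.
State = Dict[str, Any]
Stage = Callable[[State], State]


def retrieve_datasets(state: State) -> State:
    # Placeholder: a real system would search existing labeled datasets.
    examples = list(state.get("examples", []))
    examples.append(("retrieved input", "retrieved label"))
    return {**state, "examples": examples}


def generate_dataset(state: State) -> State:
    # Placeholder: a real system would prompt an LLM for synthetic examples.
    examples = list(state.get("examples", []))
    examples.append(("generated input", "generated label"))
    return {**state, "examples": examples}


def retrieve_and_finetune(state: State) -> State:
    # Placeholder: a real system would pick a pretrained model and fine-tune it.
    count = len(state.get("examples", []))
    return {**state, "model": f"model fine-tuned on {count} examples"}


def evaluate(state: State) -> State:
    # Placeholder: records only whether there is a model to evaluate.
    return {**state, "evaluated": "model" in state}


def build_demo(state: State) -> State:
    # Placeholder for the optional web UI step.
    return {**state, "demo": "web UI placeholder"}


STAGES: List[Tuple[str, Stage]] = [
    ("dataset_retrieval", retrieve_datasets),
    ("dataset_generation", generate_dataset),
    ("training", retrieve_and_finetune),
    ("evaluation", evaluate),
    ("demo", build_demo),
]


def run_pipeline(prompt: str, disabled: FrozenSet[str] = frozenset()) -> State:
    """Run each enabled stage in order, threading a state dict through them."""
    state: State = {"prompt": prompt}
    for name, stage in STAGES:
        if name in disabled:
            continue  # Modular design: a disabled stage is simply skipped.
        state = stage(state)
    return state
```

For example, `run_pipeline("Answer questions about a passage", disabled=frozenset({"demo"}))` would run data collection, training, and evaluation but skip the optional UI step. Keeping each stage as a function over a shared state dict is one simple way to make stages swappable; the actual framework may organize its components differently.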

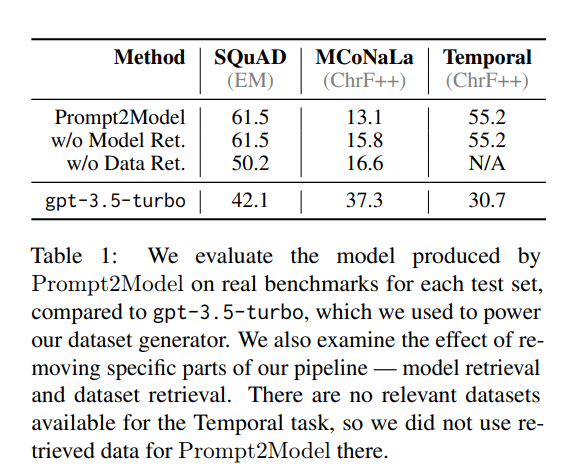

In their empirical study, the team compared models produced by Prompt2Model with the baseline LLM gpt-3.5-turbo on the SQuAD, MCoNaLa, and Temporal benchmarks. The models produced by Prompt2Model substantially surpass gpt-3.5-turbo on SQuAD and Temporal, but perform significantly worse on MCoNaLa’s Japanese-to-Python task, which the team attributes to the low diversity of the generated dataset of Japanese queries.

Overall, Prompt2Model delivers small yet accurate models while keeping the same easy-to-use interface as LLMs, and its generated datasets are useful for estimating real-world performance. The team hopes their work will encourage the community to contribute novel implementations of the various components in the framework.

Prompt2Model is open source and available on the project’s GitHub. The paper Prompt2Model: Generating Deployable Models from Natural Language Instructions is on arXiv.

Author: Hecate He | Editor: Chain Zhang

