In the three months since its release, ChatGPT’s ability to generate humanlike, coherent and informative responses to a broad range of questions has evolved the OpenAI conversational large language model (LLM) from a curiosity into a magnet for public discussions regarding AI’s pros and cons. While there have been plenty of accolades, there are also serious concerns — particularly regarding ChatGPT’s occasional generation of misleading or factually incorrect responses, which have been described as “hallucinations.” These concerns and ChatGPT’s inability to access the Internet to update its knowledge have led some to suggest that such LLMs are not ready for real-world, mission-critical applications.

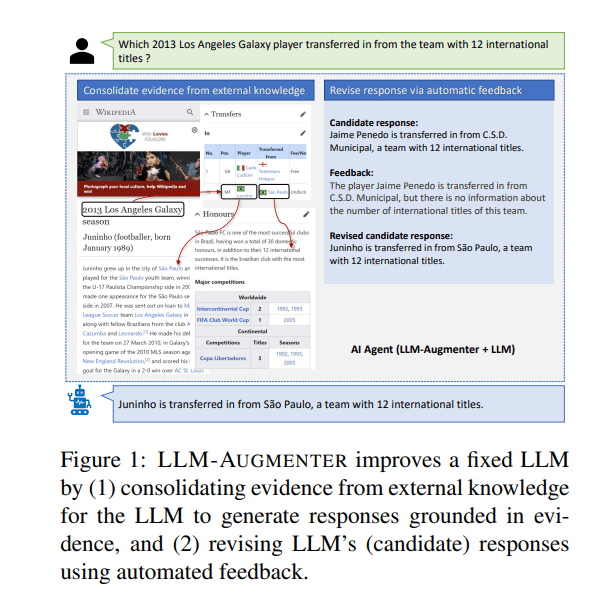

In the new paper Check Your Facts and Try Again: Improving Large Language Models with External Knowledge and Automated Feedback, a Microsoft Research and Columbia University team presents LLM-Augmenter, a system that augments black-box LLMs with a set of plug-and-play modules to significantly improve the factuality of their responses.

The team summarizes their main contributions as follows:

- We present LLM-Augmenter to improve LLMs with external knowledge and automated feedback using PnP modules.

- We perform an empirical study to validate the effectiveness of LLM-Augmenter using two types of mission-critical tasks, information-seeking dialogue and open-domain Wiki question answering (Wiki QA).

The LLM-Augmenter process comprises three steps: 1) Given a user query, LLM-Augmenter first retrieves evidence from an external knowledge source (e.g. web search or task-specific databases). It can also link retrieved raw evidence with relevant context and reason on the concatenation to build “evidence chains.” 2) LLM-Augmenter then queries a fixed LLM such as ChatGPT via a prompt containing the consolidated evidence to enable the LLM to generate a response grounded in evidence. 3) Finally, LLM-Augmenter verifies the generated response and generates a corresponding feedback message, which is used to revise and iterate the ChatGPT query until a candidate response passes verification.

Key components in the LLM-Augmenter architecture are its PnP modules — Working Memory, Policy, Action Executor, and Utility — which are designed to mitigate generation issues such as hallucinations by encouraging the fixed LLM to generate its responses with the help of grounded external knowledge and automated feedback.

The Working Memory module tracks the dialogue state that captures all essential information in the conversation. The Policy module selects the next system action that leads to the best expected reward, which the Action Executor module performs. The Utility module then generates a utility score and corresponding feedback consistent with the context.

The team used ChapGPT as a backbone black-box LLM in their empirical study and evaluated LLM-Augmenter on information-seeking dialogue tasks and the open-domain Wiki question answering (Wiki QA) task. LLM-Augmenter achieved substantial Knowledge F1 score improvements across all tasks in the experiments.

This work demonstrates that the proposed LLM-Augmenter approach can successfully augment black-box LLMs with external knowledge relevant to their conversations with users to significantly mitigate hallucination issues without sacrificing the fluency and informativeness of their generated responses.

Source code and models are available on the project’s GitHub. The paper Check Your Facts and Try Again: Improving Large Language Models with External Knowledge and Automated Feedback is on arXiv.

Author: Hecate He | Editor: Michael Sarazen

We know you don’t want to miss any news or research breakthroughs. Subscribe to our popular newsletter Synced Global AI Weekly to get weekly AI updates.

ChatGPT is so famous now.

I am immensely grateful to the author for their dedication to inclusivity and diversity

This LLM-Augmenter approach sounds really promising! Using external knowledge and automated feedback to reduce hallucinations could make ChatGPT much more reliable for real-world tasks. Excited to see how it performs in practice. If anyone wants to explore practical integrations or support, it’s also handy to know how to contact GasBuddy for any location-based data issues.

Pingback: MIT gets AI to study its own notes and learn faster – Morning Overview

As a resident of Ireland, I’m excited about the advancements in financial analytics tailored for retail investors like us. The innovative approach to predictive analytics allows seamless navigation through digital assets and global equities. By utilizing machine learning models, platforms can connect us with top-tier brokers, ensuring optimal entry prices. For those looking to explore these opportunities, I highly recommend checking out https://neuralink-ai-trading.net neuralink ireland. This could be a game-changer for portfolio growth, aiming to enhance our financial strategies significantly.