The explanatory power of human language has been essential to human evolution: it lets us predict a wide range of phenomena accurately without having to rediscover each one through a potentially painful process of trial and error. Is it possible to endow machines with similar abilities?

In the new paper Explanatory Learning: Beyond Empiricism in Neural Networks, a research team from Sapienza University of Rome and OpenAI introduces an explanatory learning procedure that enables machines to understand existing explanations expressed as symbolic sequences and to create new explanations for unexplained phenomena. The researchers further propose Critical Rationalist Networks (CRNs), deep learning models that take a rationalism-based approach to discovering such explanations for novel phenomena.

The researchers summarize their main contributions as:

- We formulate the challenge of creating a machine that masters a language as the problem of learning an interpreter from a collection of examples in the form (explanation, observations). The only assumption we make is this dual structure of data; explanations are free strings, and are not required to fit any formal grammar.

- We present Odeen, a basic environment to test EL approaches, which draws inspiration from the board game Zendo (Heath, 2001). Odeen simulates the work of a scientist in a small universe of simple geometric figures.

- We argue that the dominating empiricist ML approaches are not suitable for EL problems. We propose Critical Rationalist Networks (CRNs), a family of models designed according to the epistemological philosophy pushed forward by Popper (1935). Although a CRN is implemented using two neural networks, the working hypothesis of such a model does not coincide with the adjustable network parameters, but rather with a language proposition that can only be accepted or refused in toto.

The team frames Explanatory Learning (EL) as a new class of machine learning problems. They recast the general problem of making new predictions for a given phenomenon as a binary classification task, i.e., predicting whether a sample drawn from all possible observations belongs to the phenomenon or not.
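To make the binary classification framing concrete, here is a minimal sketch in Python. It is not taken from the paper: the toy universe, the explanation format ("at least N figure") and the names `toy_interpreter` and `predict` are all assumptions made for illustration, and the hand-written rule stands in for an interpreter that the paper would learn from (explanation, observations) pairs.

```python
from typing import List, Tuple

# Hypothetical toy universe: an observation is a tuple of figure names.
Observation = Tuple[str, ...]


def toy_interpreter(explanation: str, observation: Observation) -> bool:
    """Hand-written stand-in for a learned interpreter (illustrative only).

    It understands only explanations of the form "at least N <figure>" and
    returns True when the observation belongs to the explained phenomenon.
    """
    words = explanation.split()
    if len(words) != 4 or words[:2] != ["at", "least"]:
        raise ValueError(f"unsupported explanation: {explanation!r}")
    threshold, figure = int(words[2]), words[3]
    return observation.count(figure) >= threshold


def predict(explanation: str, observations: List[Observation]) -> List[bool]:
    """Binary classification: one in/out label per candidate observation."""
    return [toy_interpreter(explanation, obs) for obs in observations]


samples: List[Observation] = [
    ("square", "circle"),
    ("square", "square", "circle"),
    ("circle",),
]
labels = predict("at least 2 square", samples)
print(labels)  # [False, True, False]
```

Under this framing, knowing the right explanation is enough to label any observation, which is what separates an EL problem from fitting labels directly.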

The team introduces Odeen, a puzzle game environment and benchmark for experimenting with the EL paradigm. Each Odeen “game” can be regarded as a different phenomenon in a universe where each element is a sequence of geometric figures. Players attempt to make correct predictions for a given new phenomenon from few observations in conjunction with explanations and observations of other phenomena.

The researchers then propose Critical Rationalist Networks (CRNs), deep learning models that are implemented using two neural networks and take a rationalist view of knowledge acquisition to tackle the EL problem. The team notes that CRN predictions follow directly from human-understandable explanations available in the output, which makes the models explainable by construction. CRNs can also adjust their processing at test time for harder inferences and can offer strong confidence guarantees on their predictions.
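The idea of a hypothesis that is accepted or refused as a whole can be sketched as a propose-and-check loop. The Python below is an illustration only. It assumes that one component proposes candidate explanations and the other checks each candidate against the known observations, a division of labour this article does not spell out. The functions `propose`, `interpret` and `first_consistent`, the "at least N figure" rule format and the `budget` parameter are hypothetical, and simple hand-written functions stand in for the two neural networks.

```python
from typing import Iterator, List, Optional, Tuple

Observation = Tuple[str, ...]
Labeled = Tuple[Observation, bool]  # (observation, belongs to the phenomenon?)


def propose(observed: List[Labeled]) -> Iterator[str]:
    """Stand-in for a network that proposes candidate explanations."""
    figures = sorted({fig for obs, _ in observed for fig in obs})
    for figure in figures:
        for n in (1, 2, 3):
            yield f"at least {n} {figure}"


def interpret(explanation: str, observation: Observation) -> bool:
    """Stand-in for a network that applies an explanation to an observation."""
    _, _, n, figure = explanation.split()
    return observation.count(figure) >= int(n)


def first_consistent(observed: List[Labeled], budget: int = 100) -> Optional[str]:
    """Return the first candidate that agrees with every labeled observation.

    A candidate is refused as a whole as soon as it contradicts one
    observation. `budget` caps how many candidates are tried, so a harder
    problem can be given more test-time search.
    """
    for tried, candidate in enumerate(propose(observed)):
        if tried >= budget:
            break
        if all(interpret(candidate, obs) == label for obs, label in observed):
            return candidate
    return None


observed: List[Labeled] = [
    (("square", "square", "circle"), True),
    (("square", "circle"), False),
    (("circle", "circle"), False),
]
print(first_consistent(observed))            # at least 2 square
print(first_consistent(observed, budget=3))  # None
```

Because the prediction comes from applying the surviving explanation, the explanation itself is part of the output. Raising `budget` mirrors the idea of spending more computation at test time on harder inferences.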

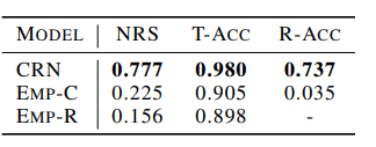

Correct explanation rate of CRNs and the empiricist models

In their evaluations, the team compared CRNs to radical (EMP-R) and conscious (EMP-C) empiricist models on their Odeen challenge. The results show that CRNs consistently outperform the other models, discovering the correct explanation for 880 out of 1132 new phenomena for a nearest rule score (NRS) of 77.7 percent compared to the empiricist models’ best of 22.5 percent.
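As a quick check, the reported 77.7 percent matches the two counts given above. The short Python snippet below only redoes that arithmetic; the variable names are illustrative.

```python
# Counts reported in the article for CRNs on the Odeen challenge.
correct, total = 880, 1132

rate = 100 * correct / total
print(f"{rate:.1f}%")  # 77.7%
```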

Associated code and the Odeen dataset are available on the project’s GitHub. The paper Explanatory Learning: Beyond Empiricism in Neural Networks is on arXiv.

Author: Hecate He | Editor: Michael Sarazen
