In-context learning has enabled pretrained language models to achieve impressive zero- and few-shot learning performance without parameter updates. Most conventional in-context learning techniques, however, restrict the number of demonstration examples, which limits their effectiveness when a large number of examples are available.

In the new paper Structured Prompting: Scaling In-Context Learning to 1,000 Examples, a Microsoft Research team proposes structured prompting. The novel approach breaks through conventional in-context learning length limits, scaling to thousands of examples with reduced computational complexity and superior performance and stability.

In-context learning prompts a language model to detect the desired task and generate an answer for a given input based on instructions and input-output demonstration examples. This is an attractive learning approach because, unlike fine-tuning, it does not require updating the model parameters. The number of demonstration examples is, however, restricted by the size of the pretrained language model’s context window, which typically accommodates only 5 to 100 examples. Conventional in-context learning approaches are thus unable to leverage additional available examples to improve model performance.

To address this limitation, the team proposes a novel structured prompting approach. A large number of examples are first divided into groups that are independently encoded by the language model to obtain representations of group-structured exemplars. The test input then attends to these encoded representations through a rescaled attention mechanism in each layer, and the language model generates the final answer.
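The following is a minimal sketch of what a rescaled attention step could look like for a single test-input query. The article does not spell out the rescaling rule, so the one used here is an assumption: the test input's own tokens are up-weighted by the number of groups so that they are not drowned out by the many exemplar tokens. The function name `rescaled_attention` and its arguments are hypothetical, and vectors are plain Python lists to keep the example dependency-free.

```python
import math


def rescaled_attention(query, group_keys, group_values, self_keys, self_values):
    """Attend from one test-input query over exemplar groups and the test input.

    group_keys / group_values: one list of vectors per independently encoded group.
    self_keys / self_values: vectors for the test-input tokens themselves.
    Returns (attention_weights, output_vector).
    """
    # Assumed rescaling rule: test-input tokens are up-weighted by the group count.
    factor = max(len(group_keys), 1)
    scale = 1.0 / math.sqrt(len(query))

    def logit(key):
        return scale * sum(q * k for q, k in zip(query, key))

    logits, multipliers, values = [], [], []
    for keys, vals in zip(group_keys, group_values):
        for key, val in zip(keys, vals):
            logits.append(logit(key))
            multipliers.append(1.0)
            values.append(val)
    for key, val in zip(self_keys, self_values):
        logits.append(logit(key))
        multipliers.append(float(factor))
        values.append(val)

    # Subtract the largest logit before exponentiating for numerical stability.
    peak = max(logits)
    scores = [m * math.exp(x - peak) for m, x in zip(multipliers, logits)]
    total = sum(scores)
    weights = [s / total for s in scores]

    # Weighted sum of the value vectors.
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return weights, output
```

With an all-zero query every logit is equal, so two single-token groups and one test-input token receive weights 0.25, 0.25 and 0.5 under this assumed rule: the test token counts as much as the two groups combined.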

The team’s grouped context encoding approach is able to deal with longer sequences, and because attention is computed within each group rather than across all examples at once, the computational complexity can be reduced from quadratic to linear in the number of examples. The researchers also right-align all the groups to ensure they have the same maximum position index and the same relative distance with respect to the test input. In this way, the test input will be adjacent to all exemplars and thus pay equal attention to all of them. These techniques enable the approach to scale in-context learning more efficiently and effectively.
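Both ideas can be illustrated with a small sketch. The helper names `right_aligned_positions` and `attention_pairs` are hypothetical, and the cost model is a simplifying assumption: it counts query-key pairs under full (non-causal) attention and ignores constant factors.

```python
def right_aligned_positions(group_lengths, test_length):
    """Assign position ids so every group ends at the same maximum index."""
    max_len = max(group_lengths)
    # Shorter groups start later, so all groups end at position max_len - 1.
    group_positions = [list(range(max_len - n, max_len)) for n in group_lengths]
    # The test input continues right after the shared end of every group.
    test_positions = list(range(max_len, max_len + test_length))
    return group_positions, test_positions


def attention_pairs(group_lengths, test_length):
    """Count query-key pairs for conventional vs. grouped encoding."""
    total = sum(group_lengths)
    # Conventional prompting: one long sequence, every token sees every token.
    conventional = (total + test_length) ** 2
    # Grouped encoding: tokens attend only within their own group, then the
    # test input attends to all exemplar tokens and to itself.
    structured = sum(n * n for n in group_lengths) + test_length * (total + test_length)
    return conventional, structured
```

For groups of 3, 5 and 4 tokens and a 2-token test input, `right_aligned_positions([3, 5, 4], 2)` gives group positions `[2, 3, 4]`, `[0, 1, 2, 3, 4]` and `[1, 2, 3, 4]`, with the test input at `[5, 6]`, so the test input sits directly after every group. For ten groups of 100 tokens and a 20-token test input, `attention_pairs([100] * 10, 20)` returns `(1040400, 120400)`. With a fixed group size, adding more groups grows the grouped cost linearly rather than quadratically.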

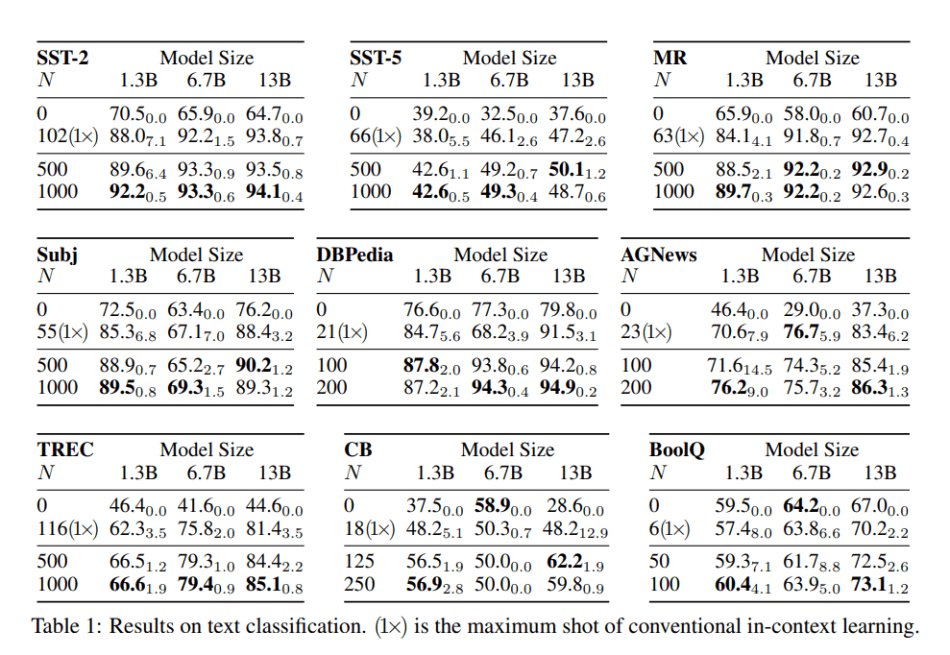

In their empirical study, the team applied structured prompting to open-source GPT-like models and then evaluated them on text classification, multiple-choice, and open-ended generation tasks. The results show that structured prompting achieves consistent and significant improvements compared to conventional prompting approaches and makes in-context learning much more stable across different seeds.

This work introduces an effective way to utilize more examples for in-context learning. The proposed structured prompting approach is able to scale up the number of examples with reduced computational complexity and improved end-task performance and stability.

The code is available on the project’s GitHub. The paper Structured Prompting: Scaling In-Context Learning to 1,000 Examples is on arXiv.

Author: Hecate He | Editor: Michael Sarazen

