Background

Research on Artificial Intelligence (AI) has made great progress in recent years. Robots are becoming more and more clever, and they even have a new name: “Super Intelligence”. How can we mere humans communicate with these new friends? The answer lies in conversational systems.

History of Conversational Systems

LUNAR [2] is a prototype natural language question answering system that helps lunar geologists access chemical analysis data on lunar rock and soil composition. It performs syntactic analysis with an Augmented Transition Network (ATN) grammar and uses heuristic and semantic information to choose the most likely parse.

SHRDLU [3] was an early natural language understanding computer program, developed by Terry Winograd at MIT in 1968–1970. In it, the user carries on a conversation with the computer, moving objects, naming collections and querying the state of a simplified “blocks world”, essentially a virtual box filled with different blocks. It was developed based on the belief that “a computer cannot deal with language unless it can understand the subject it is discussing”.

Then story understanding and generation systems appeared. These systems could infer actions, actors, and objects from human words. They fall into several types: script-based understanding, plan-based understanding, dynamic memory, and story telling. Below are some typical systems of these types.

Script-based understanding: SAM (Script Applier Mechanism) [4], FRUMP [5]

Dynamic Memory: IPP [6], BORIS [7], CYRUS [8]

Story Telling: TALE-SPIN [9]

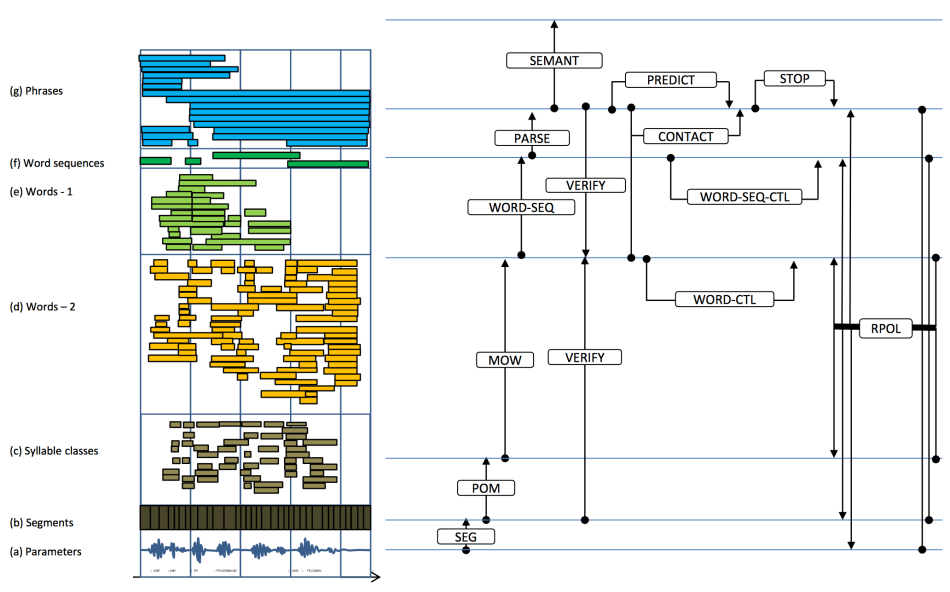

In the 1980s, early speech conversational systems started to appear. The Hearsay-II Speech-Understanding System [10] integrates multiple levels of information processing, with knowledge sources coordinated through the blackboard model (Fig. 3). The levels are parameter, segment, syllable, word, word-sequence, phrase, and database interface. It combines top-down (hypothesis-driven) and bottom-up (data-driven) processing.
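The blackboard idea can be illustrated with a toy sketch. This is not Hearsay-II itself: the lexicon, the word expectations, and all function names below are hypothetical, and only three of the seven levels are exercised. It only shows how independent knowledge sources can post hypotheses to a shared structure, with one source working bottom-up from the data and another working top-down from an existing hypothesis.

```python
from dataclasses import dataclass, field

# Level names taken from the article; only some are used in this toy example.
LEVELS = ["parameter", "segment", "syllable", "word",
          "word-sequence", "phrase", "database interface"]

# Hypothetical toy knowledge, invented for illustration only.
TOY_LEXICON = {("lu", "nar"): "lunar", ("rock",): "rock"}
TOY_EXPECTATIONS = {"lunar": "rock"}  # after "lunar", expect "rock"


@dataclass
class Blackboard:
    """Shared store of hypotheses, one list per level."""
    hypotheses: dict = field(
        default_factory=lambda: {level: [] for level in LEVELS})

    def post(self, level, value):
        """Add a hypothesis; return True only if it is new."""
        if value in self.hypotheses[level]:
            return False
        self.hypotheses[level].append(value)
        return True


def words_from_syllables(bb):
    """Bottom-up (data-driven): join adjacent syllables into known words."""
    syllables = bb.hypotheses["syllable"]
    changed = False
    for start in range(len(syllables)):
        for end in range(start + 1, len(syllables) + 1):
            word = TOY_LEXICON.get(tuple(syllables[start:end]))
            if word is not None:
                changed |= bb.post("word", word)
    return changed


def predict_next_word(bb):
    """Top-down (hypothesis-driven): an existing word predicts the next one."""
    changed = False
    for word in list(bb.hypotheses["word"]):
        expected = TOY_EXPECTATIONS.get(word)
        if expected is not None:
            changed |= bb.post("word", expected)
    return changed


def phrase_from_words(bb):
    """Bottom-up: propose a phrase once two or more words are hypothesized."""
    words = bb.hypotheses["word"]
    if len(words) >= 2:
        return bb.post("phrase", " ".join(words))
    return False


def run(bb, knowledge_sources):
    """Keep invoking knowledge sources until none adds a new hypothesis."""
    progress = True
    while progress:
        progress = False
        for source in knowledge_sources:
            if source(bb):
                progress = True
```

If only the syllables "lu" and "nar" are posted, the bottom-up source recognizes "lunar", the top-down source predicts "rock" without any acoustic evidence for it, and the phrase source then combines both. A real system would also score competing hypotheses, which this sketch leaves out.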

In the 2000s, conversational systems were equipped with all the basic functions needed to support interactive give and take (not only responding to questions but also asking them), to recognize the costs of interaction and delay, to manage interruptions effectively, and to acknowledge the social and emotional aspects of interaction. These earlier works are valuable and laid the foundation for current conversational systems.

Applications of Current Conversational Systems

Chatbot

From the point of view of application scenarios, recent conversational systems can be divided into five categories: online customer service, entertainment, education, personal assistants, and intelligent quiz (question answering).

The main function of an online customer service system is to communicate with users and automatically reply to basic questions about products or services, in order to reduce operating costs and enhance the user experience. The application scenario is usually home and mobile terminals. In China, representative commercial systems are the Little-I robot and the Jingdong JIMI customer service robot. Users can chat with JIMI to get specific product information and to give feedback on problems in the online shopping experience. Commendably, JIMI has a certain rejection capability: it knows which questions it cannot answer and when to hand over to human customer service.
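A rejection capability of this kind can be sketched as a confidence threshold. The code below is not how JIMI works, which the article does not describe. It is a minimal illustration under assumptions: the FAQ entries, the word-overlap (Jaccard) score, and the 0.5 threshold are all hypothetical.

```python
import re

# Hypothetical FAQ entries, invented for illustration only.
FAQ = {
    "what is the delivery time": "Orders usually ship within two days.",
    "how do i return a product": "You can request a return from the order page.",
}


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer_or_handoff(question, threshold=0.5):
    """Answer from the FAQ, or reject and hand off when confidence is low."""
    query = tokenize(question)
    best_score, best_answer = 0.0, None
    for known_question, answer in FAQ.items():
        known = tokenize(known_question)
        union = query | known
        # Jaccard similarity: shared words divided by all distinct words.
        score = len(query & known) / len(union) if union else 0.0
        if score > best_score:
            best_score, best_answer = score, answer
    if best_answer is None or best_score < threshold:
        return "handoff", "Let me connect you to a human agent."
    return "answer", best_answer
```

A question that closely matches a known entry is answered directly, while an unrelated one falls below the threshold and is passed to a human agent. Raising the threshold makes the system reject more often, trading coverage for fewer wrong answers.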

The main function of entertainment conversational systems is to chat with users in the open domain, providing companionship, emotional comfort, psychological counseling, and so on. The application scenario is usually social media and children’s toys. Representative systems include Microsoft XiaoIce, WeChat XiaoWei, Small Yellow Chicken, and Love Dolls. In addition to open-domain chat, XiaoIce and XiaoWei also provide services on specific topics, such as weather forecasts and everyday knowledge.

Educational conversational systems, based on educational content, aim to build an interactive language environment that helps users learn a language. They guide users step by step and help them master the desired skills. Typical application scenarios include interactive learning, training software, and intelligent toys. For example, iFLYTEK Hipanda can help children learn Tang poetry.

Users interact with personal assistants primarily through voice or text to handle personal affairs, such as weather inquiries, air quality inquiries, calendar reminders, and positioning, which makes daily life easier. The application scenario is usually portable mobile devices. Representative systems are Apple Siri, Google Now, and Microsoft Cortana. Among them, Apple Siri appears to lead the commercial development trend of personal assistant applications on mobile devices.

The main functions of intelligent quiz systems, also called intelligent question answering systems, are to answer factual questions and to solve problems that require calculation or logical reasoning, all posed by users in natural language. These systems meet users’ information needs and assist them in decision making. The application scenario is usually a question answering service robot. Typical intelligent question answering systems are IBM Watson, Wolfram Alpha, and Magi [13].

Smart home

Conversational systems are not limited to chatbots; they have already become assistants in our homes. You can access the control center just by saying a few short words. You can ask for the weather or check facts on Wikipedia. In principle, any compatible electrical device can be connected to the smart home assistant and be at your command.

Apple HomePod automatically analyzes the acoustics, adjusts the sound based on the speaker’s location, and steers the music in the optimal direction. Whether HomePod is against the wall, on a shelf, or in the middle of the room, everyone gets an immersive listening experience. If you want to change the song, just ask Siri on HomePod. It’s the most intuitive way to find and play a song. Innovative signal processing allows Siri to hear your requests from afar, even with the music playing at full blast. (https://www.apple.com/homepod/)

Google Home is powered by the Google Assistant. Ask it questions. Tell it to do things. And with support for multiple users, it can distinguish your voice from others in your home so you get a more personalized experience. With your permission, Google Home will learn about you and retrieve your flight information, set alarms and timers, and even tell you about the traffic on your way to work. Google Home connects seamlessly with smart devices like Chromecast, Nest and Philips Hue, so you can use your voice to set the perfect temperature or turn down the lights. (https://madeby.google.com/home/)

Amazon Echo plays all your music from Amazon Music, Spotify, Pandora, iHeartRadio, TuneIn, and more using just your voice. It fills the room with immersive audio and hears you from across the room with far-field voice recognition, even while music is playing. It can also answer questions, read the news, report traffic and weather, read audiobooks from Audible, give info on local businesses, provide sports scores and schedules, and more using the Alexa Voice Service. (https://www.amazon.com/Amazon-Echo-And-Alexa-Devices/b?ie=UTF8&node=9818047011)

For those who don’t know how amazing these smart home devices are, watch the videos below.

https://www.youtube.com/watch?v=hPXS7rC1PWo

https://www.youtube.com/watch?v=dpnxTXILS4s

Leading the Future

Conversational Robot

Conversational systems have endowed robots, especially humanoids, with the “ability” to communicate with us verbally. The voices of these humanoids are usually designed to sound sweet.

Communication robot Sota (Fig. 6) has already been used for PowerPoint presentations. Japan’s largest telecommunications company, NTT, is rolling out Sota in elderly care facilities to talk with and help care for seniors, and in banks to guide customers as a receptionist.

Humanoid robot Pepper (Fig. 7), designed by Aldebaran Robotics and Softbank, is intended to make people happy, enhance people’s lives, facilitate relationships, have fun with people, and connect people with the outside world. It has become one of the most popular humanoid robots in the world.

ERICA [14], who, it turns out, is 23, is the most advanced humanoid to have come out of a collaborative effort between Osaka University, Kyoto University, and the Advanced Telecommunications Research Institute International (ATR). She is intended to communicate naturally through human-like behavior.

Robots that can talk are becoming partners in our lives. The era of symbiosis between humans and robots is coming.

Conclusions

Conversational systems interact with people through language to assist, enable, or entertain. After a long history of development, conversational systems are making our lives more and more convenient and intelligent. Some of us hope to talk to a smart device that can understand all the meanings behind our words. Some of us hope to get comfort from a humanoid when feeling emotional. Don’t worry, the time is coming. But please do not forget to talk to someone who loves you and is right beside you.

Comments from the Author

Conversational systems have a long history of development. They have progressed from a voice interface metaphor to a human metaphor, from rule-based language to spontaneous language, from short utterances to mixed utterances, from cold to human-like, and from predictable to less predictable. However, several limitations remain to be solved.

- Limited Application. Every conversational system today is designed for a specific target group of users. How can we design a general system, with the same basic functions but different personalities, knowledge domains, and behavior styles, that can be used in more scenarios?

- Unreal Perfection. Have you ever heard a human speak without any disfluency? System utterances are usually designed to be unrealistically perfect. How about adding some fillers to the system’s feedback?

- Fixed Skeleton. You may have noticed that even the smartest system cannot keep updating its knowledge during a conversation. Using unsupervised learning and integrating new corpora may help in designing a highly interactive conversational system.

- Taking the Turn. Systems never interrupt to take the turn. If they have already predicted what we are going to say, they could take the turn and respond immediately, with no need to wait for us to finish the whole sentence.
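The turn-taking idea in the last point can be sketched as incremental matching. This is only one possible reading of it, under assumptions: the requests, the canned replies, and the function name below are hypothetical, and a real system would predict from a language model rather than from a fixed list. The system listens word by word and takes the turn as soon as the words heard so far are compatible with exactly one known request.

```python
# Hypothetical requests and canned replies, invented for illustration only.
KNOWN_REQUESTS = {
    "what is the weather today": "It is sunny today.",
    "what is the time now": "It is ten o'clock.",
    "play some music please": "Playing music.",
}


def respond_incrementally(words):
    """Return (response, words_heard) at the earliest unambiguous point.

    The response is None when the input matches no known request, or when
    the input ends while several requests are still possible.
    """
    heard = []
    for word in words:
        heard.append(word.lower())
        # Keep the requests whose first words equal everything heard so far.
        candidates = [
            request for request in KNOWN_REQUESTS
            if request.split()[:len(heard)] == heard
        ]
        if not candidates:
            return None, len(heard)
        if len(candidates) == 1:
            # Only one continuation is possible: take the turn now.
            return KNOWN_REQUESTS[candidates[0]], len(heard)
    return None, len(heard)
```

With these toy requests, the system replies to "what is the weather today" after the fourth word, and to "play some music please" after the first. Whether interrupting that early feels natural or rude to a human listener is a separate design question.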

References

[1]. Toyoaki Nishida, Atsushi Nakazawa, Yoshimasa Ohmoto, Yasser Mohammad. Conversational Informatics: A Data-Intensive Approach with Emphasis on Nonverbal Communication, Springer 2014.

[2]. Woods W A. Progress in natural language understanding: an application to lunar geology[C]//Proceedings of the June 4-8, 1973, national computer conference and exposition. ACM, 1973: 441-450.

[3]. Winograd T. Understanding natural language[J]. Cognitive psychology, 1972, 3(1): 1-191.

[4]. Cullingford, Richard E: SAM and Micro SAM. In Roger C. Schank, & Christopher K. Riesbeck (Eds.), Inside computer understanding. Hillsdale, NJ: Erlbaum, 1981

[5]. DeJong, Gerald F.: Skimming stories in real time: An experiment in integrated understanding (Technical Report YALE/DCS/tr158). New Haven, CT: Computer Science Department, Yale University, 1979

[6]. Lebowitz, Michael: Generalization and memory in an integrated understanding system (Technical Report YALE/DCS/tr186). New Haven, CT: Computer Science Department, Yale University, 1980

[7]. Lehnert, Wendy G., Dyer, Michael G., Johnson, Peter N., Yang, C. J., Harley, Steve: BORIS: An experiment in in-depth understanding of narratives. Artificial Intelligence, 20(1), 15-62, 1983

[8]. Kolodner, Janet L.: Retrieval and organizational strategies in conceptual memory: A computer model. Hillsdale, NJ: Erlbaum, 1984

[9]. Meehan, James: TALE-SPIN and Micro TALE-SPIN. In Roger C. Schank, & Christopher K. Riesbeck (Eds.), Inside computer understanding. Hillsdale, NJ: Erlbaum, 1981

[10]. Lee D. Erman, Frederick Hayes-Roth, Victor R. Lesser, and D. Raj Reddy. The Hearsay-II Speech-Understanding System: Integrating Knowledge to Resolve Uncertainty, ACM Computing Surveys, Volume 12, Issue 2, Pages: 213 – 253, 1980.

[11]. Cassell J. Embodied conversational agents[M]. MIT press, 2000.

[12]. Picard R W, Picard R. Affective computing[M]. Cambridge: MIT press, 1997.

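[13]. Zhang Weinan, Liu Ting. Research progress on chatbot technology (聊天机器人技术的研究进展, in Chinese). Communications of the Chinese Association for Artificial Intelligence, 2016, Vol. 6, No. 1.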

[14]. Glas D F, Minato T, Ishi C T, et al. Erica: The erato intelligent conversational android[C]//Robot and Human Interactive Communication (RO-MAN), 2016 25th IEEE International Symposium on. IEEE, 2016: 22-29.

Author: Yuanchao Li | Editor: Joni Chung
