Transformer architectures have in recent years advanced the state of the art across a wide range of natural language processing (NLP) tasks, and vision transformers (ViT) are increasingly applied in computer vision. These successes come at a cost, however: transformers’ complexity is quadratic in input length, which results in very high energy consumption and limits both research and industrial deployment.

In the new paper HyperMixer: An MLP-based Green AI Alternative to Transformers, a team from Switzerland’s Idiap Research Institute proposes a novel multi-layer perceptron (MLP) model, HyperMixer, as an energy-efficient alternative to transformers that retains similar inductive biases. The team shows that HyperMixer can achieve performance on par with transformers while substantially lowering costs in terms of processing time, training data and hyperparameter tuning.

The team summarizes their main contributions as:

- A novel all-MLP model, HyperMixer, with inductive biases inspired by transformers.

- A performance analysis of HyperMixer against competitive alternatives on the GLUE benchmark.

- A comprehensive comparison of the Green AI cost of HyperMixer and transformers.

- An ablation demonstrating that HyperMixer learns attention patterns similar to those of transformers.

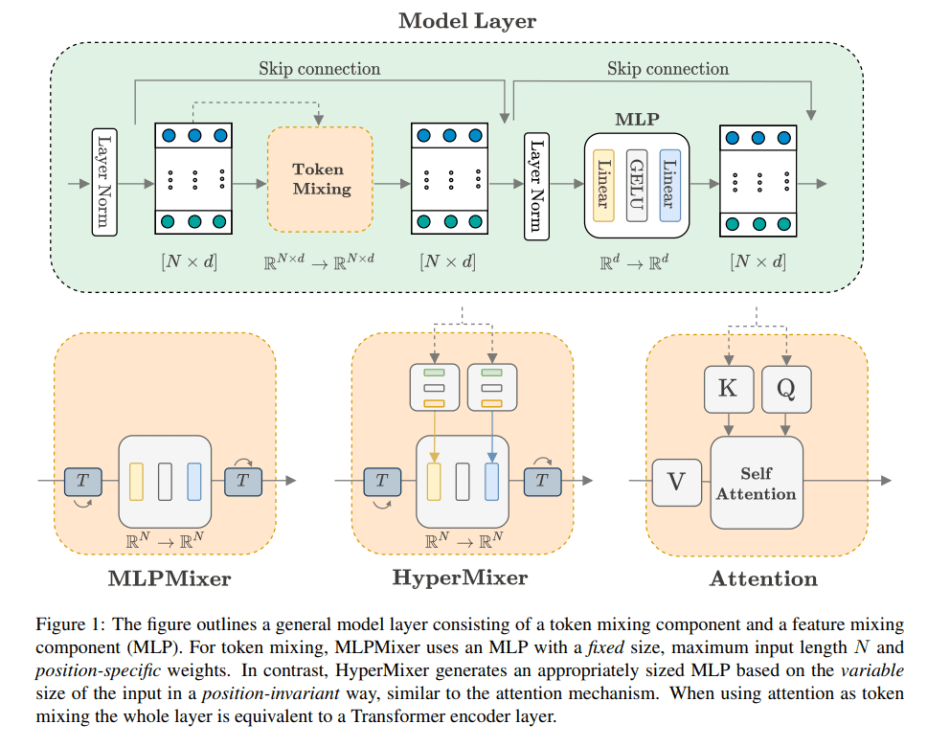

The computer vision community is seeing a surge of research on MLPs as a simpler alternative to transformers. A prominent example is the MLPMixer introduced in 2021 by Tolstikhin et al., which uses one MLP for token mixing and one for feature mixing. The Idiap researchers, however, question MLPMixer’s suitability as a transformer replacement for NLP: its token-mixing MLP learns a fixed-size set of position-specific mappings, which arguably detaches it too far from the inductive biases needed to tackle NLP tasks effectively.
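To make the "fixed-size set of position-specific mappings" concrete, here is a minimal NumPy sketch of a static token-mixing step in the spirit of MLPMixer. The function name, the sizes, and the random weights are illustrative assumptions, not code from either paper; normalization and skip connections are left out.

```python
import numpy as np

def gelu(x):
    """Tanh approximation of the GELU activation."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))

def static_token_mixing(X, W1, W2):
    """Mix information across tokens with fixed, learned weights.

    X  : (N, d)         N tokens with d features each
    W1 : (N, d_hidden)  one row per input position
    W2 : (d_hidden, N)  one column per output position
    """
    hidden = gelu(X.T @ W1)   # (d, d_hidden): each feature channel mixed over positions
    return (hidden @ W2).T    # (N, d)

rng = np.random.default_rng(0)
N, d, d_hidden = 6, 4, 8      # illustrative sizes only
W1 = rng.normal(size=(N, d_hidden))
W2 = rng.normal(size=(d_hidden, N))

Y = static_token_mixing(rng.normal(size=(N, d)), W1, W2)

# A sequence of a different length does not fit the learned weights.
try:
    static_token_mixing(rng.normal(size=(N + 1, d)), W1, W2)
    accepts_longer_input = True
except ValueError:
    accepts_longer_input = False
```

Because `W1` and `W2` carry one row or column per position, the sequence length is baked into the parameters, and each position gets its own mapping regardless of which token occupies it.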

The proposed HyperMixer uses hypernetworks to create the token-mixing MLP dynamically, which lifts two restrictions of a conventional token-mixing MLP: 1) the input no longer has to be of fixed dimensionality, and 2) the hypernetwork models the interaction between tokens with weights shared across all input positions, which lets the model learn generalizable rules that reflect the structural relationships between entities, a property known as systematicity.
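The idea can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions, not the authors' implementation: the helper names, the two-layer hypernetworks, and the random initialization are hypothetical, and positional information and layer normalization are omitted.

```python
import numpy as np

def gelu(x):
    """Tanh approximation of the GELU activation."""
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))

def init_hypernetworks(d, d_hidden, seed=0):
    """Random weights for two small hypernetworks (hypothetical setup)."""
    rng = np.random.default_rng(seed)
    def layer(n_in, n_out):
        return rng.normal(scale=1.0 / np.sqrt(n_in), size=(n_in, n_out))
    return {"in": (layer(d, d), layer(d, d_hidden)),
            "out": (layer(d, d), layer(d, d_hidden))}

def hypernetwork(X, weights):
    """Apply the same two-layer MLP to every token; returns (N, d_hidden)."""
    A, B = weights
    return gelu(X @ A) @ B

def hyper_token_mixing(X, params):
    """Token mixing whose weights are generated from the input X of shape (N, d)."""
    W1 = hypernetwork(X, params["in"])    # (N, d_hidden), one row per token
    W2 = hypernetwork(X, params["out"])   # (N, d_hidden), one row per token
    hidden = gelu(W1.T @ X)               # (d_hidden, d): summary over all tokens
    return W2 @ hidden                    # (N, d): mixed representation per token

params = init_hypernetworks(d=4, d_hidden=8)
rng = np.random.default_rng(1)
short_seq = rng.normal(size=(5, 4))
long_seq = rng.normal(size=(11, 4))

# The same parameters handle both sequence lengths.
out_short = hyper_token_mixing(short_seq, params)
out_long = hyper_token_mixing(long_seq, params)
```

The learned parameters here have no dimension tied to the sequence length, so the mixing weights grow with the input. In this sketch, which leaves out positional information, reordering the input tokens simply reorders the output rows.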

HyperMixer learns to generate a variable-size set of mappings in a position-invariant way, similar to the attention mechanism in transformers, while reducing the quadratic complexity to linear. This makes it a competitive alternative for training on longer inputs.
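A rough operation count illustrates the difference in scaling. The functions below are back-of-the-envelope assumptions rather than measurements from the paper: they count only the multiply-accumulates of the token-mixing matrix products, and assume that the generated mixing MLP has two weight matrices of shape (N, d_hidden) for N tokens. Projections and hypernetworks, which are applied per token, are ignored.

```python
def attention_mixing_macs(n_tokens, d_model):
    """Multiply-accumulates for the (N x N) score matrix plus its product with the values."""
    return 2 * n_tokens * n_tokens * d_model

def hypermixer_mixing_macs(n_tokens, d_model, d_hidden):
    """Multiply-accumulates for the two products with the generated (N, d_hidden) weights."""
    return 2 * n_tokens * d_hidden * d_model

# Illustrative sizes only: d_model and d_hidden are arbitrary choices.
for n in (128, 256, 512):
    print(n, attention_mixing_macs(n, 256), hypermixer_mixing_macs(n, 256, 512))
```

Doubling the sequence length quadruples the first count and doubles the second, which is the quadratic versus linear behaviour described above.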

The team evaluated HyperMixer on the sentence-pair classification tasks QQP (Iyer et al., 2017), QNLI (Rajpurkar et al., 2016), MNLI (Williams et al., 2018), and SNLI (Bowman et al., 2015), and on the single-sentence classification task SST-2 (Socher et al., 2013). They compared HyperMixer with the baseline models MLPMixer, gMLP, transformers, and FNet.

The results can be summarized as:

- HyperMixer outperforms MLPMixer and all other non-transformer baselines on every dataset except SST-2, where no model clearly wins. The gains are largest on tasks that require modelling the interactions between tokens.

- HyperMixer has inductive biases similar to those of transformers but is considerably simpler, both conceptually and in terms of computational complexity. It can be seen as a Green AI alternative to transformers, reducing the cost in terms of single-example processing time, required dataset size, and hyperparameter tuning.

- Due to its inductive biases mirroring those of transformers, HyperMixer learns patterns very similar to transformers’ self-attention mechanisms.

The resource-hungry nature of today’s large pretrained transformer language models has led the machine learning research community to explore methods that move toward a Green AI paradigm. The proposed HyperMixer takes an important step in this direction by offering the same inductive biases that have made transformers so successful for natural language understanding, but at a much lower cost.

The paper HyperMixer: An MLP-based Green AI Alternative to Transformers is on arXiv.

Author: Hecate He | Editor: Michael Sarazen
