It took humans hundreds of thousands of years to figure out how to fly. But long before aircraft were developed, cultures had already formed the concept of a “bird’s-eye view.” In recent years technology has democratized the bird’s-eye view and flown our vision beyond imagination. The question is timeless: What’s happening down there?

Thanks to quadcopters, GoPros, and YouTube, there is now plenty of available UAV (unmanned aerial vehicle) video data. In the aftermath of earthquakes and floods, UAVs are used to estimate damage and deliver aid in areas too dangerous or difficult for human responders to access. UAVs are also used to monitor wildlife in remote areas, while urban planners and other city officials use UAV cityscape data to better understand changing local environments. And of course, countless videographers and hobbyists regularly record and upload their UAV flights.

However, even with sufficient UAV video data and recent advances in computer vision research and technologies, it remains difficult to automate the process of understanding what’s happening in aerial video footage. To help with automatic interpretation, a team of researchers from the German Aerospace Center (DLR) and the Technical University of Munich recently published the paper ERA: A Dataset and Deep Learning Benchmark for Event Recognition in Aerial Videos.

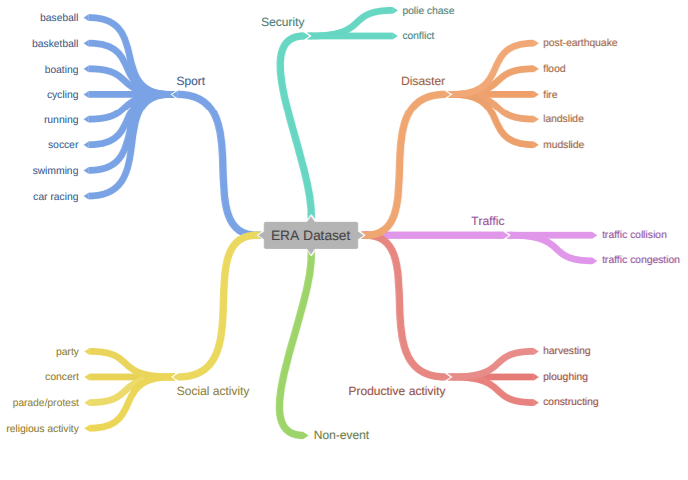

The study introduces an Event Recognition in Aerial video (ERA) dataset comprising 2,866 aerial videos collected from YouTube, each annotated with a label from one of 25 classes corresponding to an event that can be seen unfolding over a period of five seconds. The researchers say they chose five seconds as their timeframe because the minimal duration of human short-term memory is 5 to 20 seconds.

Although most existing UAV understanding datasets focus on human-centric event recognition, human action detection, and disaster event recognition, the researchers set out to collect more general aerial videos. They gleaned 24 “Event” categories from Wikipedia and added a new “non-event” category for everything else. The 25 categories were grouped as Security, Disaster, Traffic, Productive activity, Social activity, Sport, and Non-event.

Unlike previous datasets for aerial video understanding, which tend to be collected in well-controlled environments, the researchers also included videos shot under poor conditions, such as low spatial resolution, extreme illumination, and bad weather.

In their experiments the researchers used a collection of single-frame classification models and video classification models as baselines. A Temporal Relation Network (TRN) scored highly when classifying parade/protest and concert events. TRN is designed to recognize human actions by reasoning about multiscale temporal relations among video frames, and its strong performance suggests that efficiently exploiting temporal clues helps in distinguishing events with minor inter-class variance.
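To make the difference between the two baseline families concrete, here is a minimal sketch of one common way to turn per-frame classifier scores into a single video-level prediction: sample frames evenly across the clip and average their class scores. This is an illustration only and not necessarily the protocol used in the paper. The function names, the 150-frame clip length, and the example scores are all hypothetical.

```python
from typing import List, Sequence


def sample_frame_indices(num_frames: int, num_samples: int) -> List[int]:
    """Pick indices spread evenly over a clip (the center of each segment)."""
    if num_frames <= 0 or num_samples <= 0:
        raise ValueError("num_frames and num_samples must be positive")
    segment = num_frames / num_samples
    return [min(num_frames - 1, int(segment * (i + 0.5)))
            for i in range(num_samples)]


def average_frame_scores(frame_scores: Sequence[Sequence[float]]) -> List[float]:
    """Average per-frame class scores into one score vector for the video."""
    if not frame_scores:
        raise ValueError("need at least one frame")
    num_classes = len(frame_scores[0])
    return [sum(scores[c] for scores in frame_scores) / len(frame_scores)
            for c in range(num_classes)]


def predict_video(frame_scores: Sequence[Sequence[float]],
                  class_names: Sequence[str]) -> str:
    """Return the class with the highest averaged score."""
    avg = average_frame_scores(frame_scores)
    best = max(range(len(avg)), key=avg.__getitem__)
    return class_names[best]


if __name__ == "__main__":
    # Hypothetical: a 5-second clip at 30 fps has 150 frames.
    print(sample_frame_indices(150, 5))
    # Hypothetical scores from a frame classifier for two frames.
    scores = [[0.2, 0.8], [0.6, 0.4]]
    print(predict_video(scores, ["parade/protest", "concert"]))
```

Averaging discards the order of the frames, so a model of this kind cannot use how a scene changes over time. Models such as TRN reason over ordered sets of frames instead, which is the kind of temporal clue the article refers to.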

Meanwhile, the unsurprising finding that extreme conditions can disturb predictions validated the researchers’ caution regarding videos shot at night, in snow, and in similar conditions. Such videos tended to be misclassified, presenting a new challenge for future studies.

The paper’s first author Lichao Mou told Synced, “To our best knowledge, the proposed dataset, ERA, is the first dataset for generic event understanding from a bird’s eye view.” The paper notes that as the first of its kind, ERA is bound to experience growing pains. For instance, the imbalanced distribution across the dataset’s 25 classes could be a challenge for developing unbiased models.
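One widely used way to soften class imbalance during training is to weight each class inversely to its frequency, so that rare classes contribute more to the loss. The sketch below shows the idea under stated assumptions: the paper does not say it uses this technique, and the label names and counts here are made up for illustration.

```python
from collections import Counter
from typing import Dict, Sequence


def inverse_frequency_weights(labels: Sequence[str]) -> Dict[str, float]:
    """Weight each class by total / (num_classes * class_count).

    A perfectly balanced dataset gives every class a weight of 1.0;
    rarer classes get weights above 1.0, common ones below 1.0.
    """
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = len(labels)
    num_classes = len(counts)
    return {label: total / (num_classes * count)
            for label, count in counts.items()}


if __name__ == "__main__":
    # Hypothetical label list: six "non-event" clips and two "flood" clips.
    labels = ["non-event"] * 6 + ["flood"] * 2
    for label, weight in inverse_frequency_weights(labels).items():
        print(f"{label}: {weight:.3f}")
```

Weights like these are typically passed to a weighted loss function. They compensate for skewed counts but do not add information about the rare classes, so collecting more examples remains the more direct remedy.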

The ERA dataset release comes amid continuing concerns regarding both government and private drone use, especially in urban environments. The New York Police Department acquired a fleet of 14 drones in December 2018, which it said would be used to monitor events such as concerts and to assist in hostage and other crime scene investigations. The New York Civil Liberties Union responded with a statement asserting the policy “opens the door to the police department building a permanent archive of drone footage of political activity and intimate private behavior visible only from the sky.”

What’s happening down there? The ERA dataset can be a valuable tool in the development of AI models that understand the view from above better than we’ve ever imagined. As with many frontier technologies, the potential benefits must be balanced with respect for legitimate privacy concerns, which is yet another challenge facing researchers.

The paper ERA: A Dataset and Deep Learning Benchmark for Event Recognition in Aerial Videos is on arXiv, while additional information and updates can be found on the ERA dataset website.

Journalist: Fangyu Cai | Editor: Michael Sarazen
