Google’s deep learning TensorFlow platform has added Differentiable Graphics Layers with TensorFlow Graphics, a combination of computer graphics and computer vision. Google says TensorFlow Graphics can solve data labeling challenges for complex 3D vision tasks by leveraging a self-supervised training approach.

A computer graphics pipeline usually requires representation of 3D objects and their absolute position in the scene, material description, light, and camera. The renderer then uses the scene description to generate a composite render. In contrast, computer vision models take images as inputs and then infer the parameters of the scene to predict objects and their materials, three-dimensional position and orientation.

TensorFlow Graphics loops the two pipelines so that the vision system extracts scene parameters and the graphics system renders the image. The rendered image result matching the original image means the vision system is well-trained. In this setup, computer vision and computer graphics work together to form a machine learning system similar to a auto-encoder that can be trained in a self-supervised manner.

Below are some major features of TensorFlow Graphics.

TensorBoard 3D

To enable users to visually debug, TensorFlow Graphics adds a TensorBoard plug-in that can interactively display 3D meshes and point clouds.

TensorFlow Graphics Functionalities

TensorFlow provide colab notebooks covering image rendering operations including object pose estimation, interpolation, object materials, lighting, non-rigid surface deformation, spherical harmonics, and mesh convolutions. All these functionalities are ordered by difficulty.

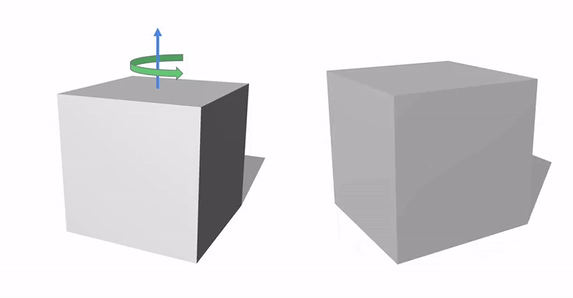

Object pose estimation plays a key role in many applications, particularly robotics. For example a robotic arm grabbing objects requires accurate pose estimation of these objects relative to the robotic arm. The example below shows the process used to train a rotation form in a neural network to predict the rotation and translation of an observed object.

Camera models play a key and fundamental role in computer vision, and they have great impact on the appearance of 3D objects projected onto the image plane. The cube below seems to zoom in and out, but in fact these changes are simply due to changes in camera focal length.

Reflectance defines the interaction of light with an object to illustrate the object’s appearance. Accurately predicting material properties lays the foundation for many tasks.

TensorFlow Graphics also offers advanced functionalities such as spherical harmonics rendering, environment map optimization, semantic mesh segmentation, etc.

The global market for 3D sensors such as built-in depth sensors on smartphone and autonomous car LiDARs is rapidly growing. These sensors output 3D data in the form of point clouds or grids. It is much more difficult for neural nets to perform convolution operations on these characterizations than on regular grid structures due to their structural irregularities. TensorFlow Graphics therefore introduces mesh segmentation, which offers two 3D convolution layers and a 3D pooling layer to enable the network to perform semantic part classification training on the grid.

TensorFlow Graphics is compatible with TensorFlow 1.13.1 and above. Click here for the API documentation and installation instructions.

Author: Yuqing Li | Editor: Michael Sarazen

0 comments on “Computer Graphics + Computer Vision = TensorFlow Graphics”