Compositing multiple objects from different sources into one image is a formidable computer graphics challenge involving relative scaling, spatial layout, occlusion, and viewpoint transformation of objects from the 3D world to a 2D image. Now the task can be accomplished by an AI-powered Compositional Generative Adversarial Network (GAN).

A new paper from a team of UC Berkeley researchers addresses the compositionality problem with no explicit prior information provided regarding the layout of objects. The key to this method is to fix one object while resizing and transforming other objects according to their complex joint interactions. These are learned by AI during a process of individual object combination and separation in the image.

The authors combine a composition conditional GAN with a decomposition conditional GAN to build a self-consistent composition-decomposition network for the model. A Spatial Transformer Network (STN), Relative Encoder-Decoder Appearance Flow Network (RAFN), self-supervised inpainting network and inference refinement network are also principal components of the system. To optimize the creation of realistic images, multiple L1 loss functions and a GAN loss with gradient penalty are applied as regularization schemes.

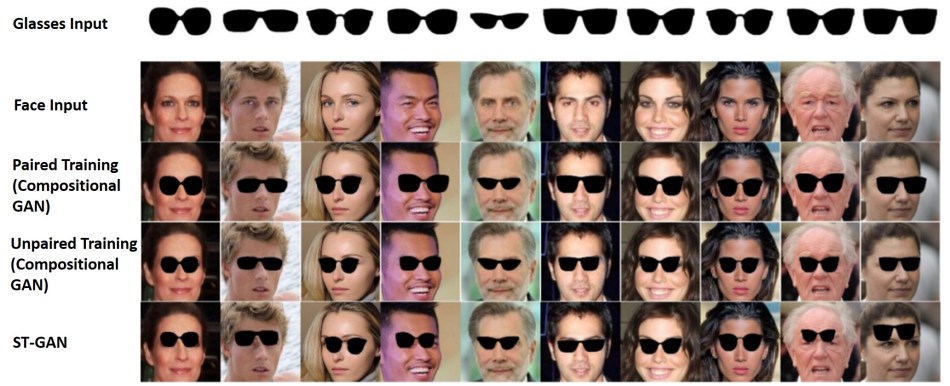

Because individual objects may or may not have their corresponding example composed image as examples in the training dataset, the authors use different methods for “paired” and “unpaired” scenarios. Paired training starts by changing the viewpoint of one object according to that of the other objects with the RAFN; while unpaired training begins with reconstructing segmentations of the joint image with the inpainting network. After proper object composition, the final refinement step performs image sharpening and artifact removal.

The authors have demonstrated Composing a Chair with a Table and Composing a Bottle with a Basket tasks, with outputs shown after each critical module.

The results were evaluated by 60 people, with 71 percent preferring the refinement network method for image generation in the chair-table case, and 64 percent preferring it in the basket-bottle case. In both cases, just 57 percent said paired dataset training showed better results than unpaired training, indicating the feasibility of unpaired training when a paired dataset is not accessible.

The researchers also performed a direct comparison between compositional GAN (with both paired and unpaired training) and previously proposed Spatial Transformer GAN (ST-GAN) via the task of Composing Faces with Glasses. The former notably outperformed the latter, as ST-GAN simply conducted object adaption into a fixed background image without considering the interaction between the two targeted objects in a 3D world.

The paper Compositional GAN: Learning Conditional Image Composition was published on arXiv in July. Detailed code and dataset information will soon be released at GitHub.

Source: Synced China

Localization: Tingting Cao | Editor: Michael Sarazen

0 comments on “Bottle into Basket: Realistic Object Composition with AI”