The 2023 BAAI Conference, a premier event in the AI industry, concluded successfully in Beijing on June 10. Initiated on June 9, the conference played host to an array of esteemed AI scholars, experienced industry leaders, passionate AI researchers, and global attendees, engaging in a blend of virtual and in-person interactions.

This year, the BAAI conference stage was graced by a remarkable lineup of AI research luminaries. The roster included Turing Award laureates – Geoffrey Hinton, Yann LeCun, Joseph Sifakis, and Andrew Chi-Chih Yao; OpenAI’s CEO, Sam Altman; Stuart Russell, founder of UC Berkeley’s Artificial Intelligence Systems Center; Bo Zhang, a member of the Chinese Academy of Sciences; Zheng Nanning, a member of the Chinese Academy of Engineering; YaQin Zhang, a member of both the Chinese Academy of Engineering and the American Academy of Arts and Sciences; and David Holz, founder and CEO of Midjourney.

Forefront Dialogues With AI Luminaries

Turing Award winner Joseph Sifakis asserted that for artificial intelligence to achieve human-like situational awareness, it must be able to build models of the external environment, particularly to understand completely new situations, and to combine learning with reasoning. He considers this a significant challenge, as evidenced by the slow progress in the semantic analysis of natural language, and he is not optimistic that it will be solved soon. A key question he raised is how to validate the properties and intentions of AI systems, particularly since many of them are not easily explainable. Sifakis offered a specific definition of an explainable system: one whose behavior can be described by a mathematical model or by another model we can comprehend. To establish system properties, he pointed to two approaches: validation and verification.

Midjourney founder David Holz discussed the uncertainties surrounding how Midjourney might evolve as Artificial General Intelligence (AGI) capabilities develop. He envisioned potential collaborations with other labs in which each contributes a distinct part of a whole, much like assembling different sensory capabilities (e.g., vision and hearing) or cognitive functions (e.g., imagination and language). Holz also suggested that there may be AGI systems that are generally competent but have specialized parts, just as humans have broad knowledge but excel in certain areas. He emphasized that the best user interfaces may not rely solely on language, implying that AGI could benefit from more diverse forms of interaction and communication with users.

Future of Life Institute founder Max Tegmark strongly advocated for the field of mechanistic interpretability in AI, drawing parallels to neuroscience. Tegmark explained that while both the human brain and artificial neural networks can be viewed as ‘black boxes’ performing complex operations, understanding the latter is significantly easier. In neuroscience, researchers can measure only a limited number of neurons at a time and face various ethical constraints; mechanistic interpretability, by contrast, allows every neuron and weight in an artificial system to be examined. He pointed out further advantages of working in this field, such as the ability to manipulate and experiment with artificial systems free of the ethical concerns that limit experiments on brains. Although the field is relatively new and not widely explored, it is making rapid progress, far exceeding the pace of traditional neuroscience. Tegmark concluded with an optimistic outlook: with more researchers joining the field, significant strides can be made, moving beyond merely assessing whether to trust AI systems and potentially reaching the ambitious goal of building systems that are guaranteed to be trustworthy.
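
To make the contrast with neuroscience concrete, here is a minimal sketch, assuming a toy two-layer ReLU network with hand-set weights (the network, weights, and function names are hypothetical and not taken from Tegmark's talk). Every hidden activation can be read directly, and each neuron can be ablated to measure its causal effect on the output, an intervention that is trivial in software but hard in a living brain.

```python
def relu(v):
    return [max(0.0, a) for a in v]

def forward(x, W1, w2, ablate=None):
    """Run a 2-layer net; optionally zero out one hidden neuron (ablation)."""
    hidden = relu([sum(w * xi for w, xi in zip(row, x)) for row in W1])
    if ablate is not None:
        hidden[ablate] = 0.0
    out = sum(w * h for w, h in zip(w2, hidden))
    return out, hidden

# Hypothetical toy weights: 2 inputs -> 3 hidden ReLU units -> 1 output.
W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w2 = [2.0, -1.0, 0.0]
x = [1.0, 2.0]

baseline, hidden = forward(x, W1, w2)
# Every activation is directly observable.
print("hidden activations:", hidden)

# Ablate each neuron in turn and record how much the output changes.
effects = [forward(x, W1, w2, ablate=j)[0] - baseline for j in range(len(w2))]
print("ablation effects:", effects)
```

In this toy case the third hidden unit is strongly active (3.0) yet has no effect on the output, because its outgoing weight is zero: reading activations alone is not enough, and interventions such as ablation help separate what a neuron represents from what it actually does.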

UC Berkeley professor and prominent computer science researcher Stuart Russell highlighted the immense potential of AI technology, asserting that AI could either significantly enhance global quality of life or lead to the destruction of civilization. He called on companies and organizations to reconsider their AI development strategies, and on governments to regulate AI, to ensure that it remains aligned with human interests.

Discussions on AI for the life sciences featured reputable scholars such as Nobel Chemistry laureate Arieh Warshel, who shared his latest cutting-edge research. Warshel explained that his team had used physically based multiscale modeling to gain insights into catalysis, and found that artificial intelligence could significantly enhance this process. The team calculated the maximum entropy of deporting without any prior knowledge of catalysis, then investigated its correlation with catalysis, and identified an intriguing relationship between the two.

BAAI also invited researchers who had made significant breakthroughs in their fields over the past year to present their technical achievements in person. Authors of important works such as OPT, T5, Flan-T5, LAION-5B, and RoBERTa attended, and many came to Beijing to share the stories behind their research results with an eager audience.

The key highlights from Synced’s coverage of select presentations, including those by Yann LeCun, Sam Altman, and Geoffrey Hinton, at the BAAI 2023 conference are summarized below.

From Machine Learning to Autonomous Intelligence: Towards Machines that can Learn, Reason & Plan

Yann LeCun | Chief AI Scientist at Meta & Silver Professor at the Courant Institute, New York University

“Machine learning is not particularly good compared to humans and animals,” LeCun began, emphasizing that the future of AI demands efforts to close the gap between the capabilities of humans and animals and what AI can presently deliver. Key missing pieces are not only the ability for AI systems to learn, but also to reason and plan. He stated that machine learning systems trained through supervised learning (SL) and reinforcement learning (RL) are specialized yet fragile, while animals and humans can quickly learn new tasks, possessing an understanding of the world and a common sense still absent in machines.

Today’s machine learning systems, due to a fixed number of computational steps between input and output, lack the capacity to reason and plan to the same extent as humans and certain animals.

LeCun posed a crucial question: “How can we make machines understand how the world works?”

He acknowledged the rapid advancements and remarkable achievements of generative AI systems in tasks such as producing images, videos, and text. However, he cautioned, “the performance of the systems is amazing if you train them on trillion tokens. But in the end, they make very stupid mistakes. They make factual errors, logical errors, and inconsistencies. They have limited reasoning abilities, could use toxic content, and have no knowledge of the underlying reality because they are purely trained on text, and what that means is a large proportion of human knowledge is completely inaccessible to them.”

The lack of experience with the physical world limits the intelligence of today’s AI systems compared with what we observe in humans and animals. Understanding how humans and animals learn so rapidly therefore becomes imperative. LeCun pointed out that infants acquire a vast amount of background knowledge about how the world works within their first few months. They grasp fundamental concepts such as object permanence, the three-dimensionality of the world, the distinction between animate and inanimate objects, stability, natural categories, and gravity. He also highlighted that teenagers can learn to drive in about 20 hours of practice, whereas even the most sophisticated AI cannot drive without extensive engineering, mapping, LIDAR, and various sensors.

“We are missing something extremely major in today’s AI systems, and we are nowhere near reaching human-level intelligence,” LeCun stated. He outlined three significant challenges for AI in the coming years:

· Learning representations and predictive models of the world

· Learning to reason like System 2, as described by Daniel Kahneman

· Learning to plan complex action sequences

Because complex tasks are accomplished hierarchically, how to achieve hierarchical planning with machine learning remains a critical open question. LeCun proposed the joint embedding predictive architecture (JEPA) to address the multimodality of predictions, that is, the fact that many different outcomes can be plausible.

To conclude his talk, LeCun outlined steps towards more advanced AI systems: self-supervised learning (SSL), handling uncertainty in predictions with JEPA architectures, learning world models from observation using SSL, and reasoning and planning by minimizing energy with respect to action variables.
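
The last of these steps, planning by minimizing an energy over action variables, can be illustrated with a minimal sketch. It assumes a hand-written one-dimensional world model (`toy_step`, where the action is simply added to the state) and a hypothetical quadratic cost: squared distance of the final state to a goal plus a penalty on large actions. In LeCun's proposal the world model and parts of the cost would be learned, and JEPA would make predictions in representation space; none of that is modeled here. Gradients are estimated with central finite differences to keep the code dependency-free.

```python
def rollout(s0, actions, step):
    """Roll the world model forward from state s0 through a sequence of actions."""
    s = s0
    for a in actions:
        s = step(s, a)
    return s

def energy(s0, actions, step, goal, lam):
    """Hypothetical cost: squared distance of the final state to the goal,
    plus an L2 penalty on the actions."""
    final = rollout(s0, actions, step)
    return (final - goal) ** 2 + lam * sum(a * a for a in actions)

def plan(s0, goal, horizon, step, lam=0.1, lr=0.05, iters=500, eps=1e-5):
    """Search for the action sequence with the lowest energy by gradient descent
    on the actions themselves (the world model stays fixed)."""
    actions = [0.0] * horizon
    for _ in range(iters):
        grads = []
        for t in range(horizon):
            up, down = actions[:], actions[:]
            up[t] += eps
            down[t] -= eps
            # Central finite-difference estimate of dE/da_t.
            grads.append((energy(s0, up, step, goal, lam)
                          - energy(s0, down, step, goal, lam)) / (2 * eps))
        actions = [a - lr * g for a, g in zip(actions, grads)]
    return actions

def toy_step(s, a):
    """Hypothetical world model: the action is added to the state."""
    return s + a

actions = plan(s0=0.0, goal=1.0, horizon=4, step=toy_step)
print([round(a, 4) for a in actions])
print(round(rollout(0.0, actions, toy_step), 4))
```

For this toy problem the answer can be checked by hand: the optimum spreads the move evenly, with every action equal to (goal - s0) / (horizon + lam) = 1 / 4.1 ≈ 0.244, so the plan stops slightly short of the goal (about 0.976) because large actions are penalized.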

Is there a chance this architecture will lead us towards human-level intelligence? Perhaps. We don’t know yet because we haven’t built it, and it will probably take a long time before we make it work ultimately. So, it’s not going to happen tomorrow. But there’s reasonable hope that this will bring us closer to human-level intelligence, where systems can reason and plan.

Interestingly, LeCun speculated that such systems would necessarily possess emotions due to their ability to predict outcomes and evaluate their desirability through the cost module, akin to emotional responses in animals.

He projected that future systems, driven by objectives, will exhibit emotion-like characteristics. However, they will also be controllable, as we can set their goals through appropriately designed cost functions. This means we can ensure these systems won’t desire to dominate the world but will instead be helpful and safe for humans.

AI Safety and Alignment Forum Opening Remark

Sam Altman | Founder and CEO of OpenAI

A virtual appearance by Sam Altman, projected onto the large screen, was met with resounding applause from the packed auditorium. Altman, currently on a global tour spanning over 20 countries across five continents, initiated the AI safety and alignment forum with his opening keynote. He shared intriguing insights from his world tour, and his optimistic yet earnest perspective on the future quickly established the tenor for his subsequent advocacy.

Much of the world’s attention, frankly, has focused on solving the AI problems of today. These are the serious issues that deserve our effort to solve. We have a lot more work to do, but given the progress that we are already making, I am confident that we will get there.

Altman passionately conveyed that the AI revolution is progressing rapidly, highlighting the immense potential benefits of Artificial General Intelligence (AGI) systems, such as shared wealth creation and improved human interaction. However, he stressed the responsibility that accompanies this power and quickly turned to the serious side of his talk: the risks involved. With the rise of increasingly powerful AI systems, he argued, global cooperation is critical to managing and mitigating these risks.

I hope we can all agree that advancing AGI safety is one of the most important areas for us to find common ground.

Altman stressed the importance of AGI governance, advocating careful coordination and cooperation among international organizations. He proposed two key areas of work toward this goal: first, establishing international norms and standards through an inclusive process, so that AGI use follows uniform guidelines across all countries; and second, leveraging international cooperation to build global trust, in a verifiable way, in the safe development of increasingly powerful AGI systems. Altman acknowledged that this proposal faces inherent challenges.

While acknowledging the ambitious nature of these endeavors, Altman identified the international scientific and technological community as a constructive starting point. He called for mechanisms that increase transparency and knowledge sharing about technical advances in AGI safety, encouraging researchers to share insights with a broader group while respecting intellectual property rights. Altman argued that this approach would deepen cooperation and lead to further investment in alignment and safety research.

Altman cited OpenAI’s alignment research efforts as an example, highlighting their focus on developing AI systems that act as helpful and safe assistants. He noted that these efforts have led to GPT-4 being more aligned with human values in its responses. Additionally, Altman suggested exploring scalable oversight, where AI systems assist humans in supervising other AI systems.

During the Q&A session, Altman underscored the importance of making AI safe and of understanding user preferences across countries and very different cultural contexts, which he said requires a lot of further input. Acknowledging China’s expertise in AI, he expressed optimism that joint efforts by Chinese and US scholars will make more remarkable contributions to AI safety in the future.

Two Paths to Intelligence

Geoffrey Hinton | Emeritus Professor of University of Toronto and Turing Award Laureate

A prominent figure in AI, Dr. Hinton recently left Google, a move that sent a clear signal: the rapid advancements in AI had made him realize the potential threats the technology poses to humanity. In his closing remarks at BAAI 2023, Dr. Hinton openly expressed his concerns and presented his proposed next steps.

One of the key questions raised by Dr. Hinton is whether artificial neural networks will soon surpass the intelligence of real ones. Through his research, he has concluded that this may happen in the near future.

He highlighted the concept of mortal computation, an alternative to conventional computing. In conventional computing, software is separated from hardware, so the knowledge held in a program or in neural-net weights is immortal: it does not depend on any particular piece of hardware. Mortal computation instead exploits the analog properties of the specific hardware it runs on, without needing exact knowledge of those properties.

Dr. Hinton introduced the “Forward-Forward” (FF) algorithm as an alternative to backpropagation for training neural networks. In empirical studies on small datasets such as MNIST and CIFAR-10, the FF algorithm achieved promising results. Dr. Hinton envisions that combining the FF algorithm with mortal computation could make it possible to run trillion-parameter neural networks with minimal power consumption.
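
As a rough illustration of the layer-local objective described in Hinton's Forward-Forward paper, the sketch below trains a single ReLU layer so that its “goodness” (the sum of squared activities) rises above a threshold for positive data and falls below it for negative data, with no backward pass through other layers. The input patterns, layer size, threshold, and learning rate are hypothetical choices for a toy problem; the paper's MNIST setup (for example, negative data built by pairing images with incorrect labels, and normalizing activity vectors between stacked layers) is not reproduced.

```python
import math
import random

def relu(v):
    return [max(0.0, a) for a in v]

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

class FFLayer:
    """One layer trained with a Forward-Forward-style local objective (toy sketch)."""

    def __init__(self, n_in, n_out, theta=2.0, lr=0.03, seed=0):
        rng = random.Random(seed)
        self.W = [[rng.gauss(0.0, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        self.theta = theta  # goodness threshold separating positive from negative
        self.lr = lr

    def goodness(self, x):
        """Sum of squared ReLU activities for input x."""
        return sum(h * h for h in relu(matvec(self.W, x)))

    def p_positive(self, x):
        """Probability that x is positive data: sigmoid(goodness - theta)."""
        return sigmoid(self.goodness(x) - self.theta)

    def update(self, x, is_positive):
        """One gradient step that uses only this layer's own activities."""
        pre = matvec(self.W, x)
        h = relu(pre)
        p = sigmoid(sum(a * a for a in h) - self.theta)
        # dLoss/dGoodness for loss = -log p (positive) or -log(1 - p) (negative).
        dl_dg = (p - 1.0) if is_positive else p
        for j, row in enumerate(self.W):
            if pre[j] <= 0.0:
                continue  # inactive ReLU unit: zero gradient
            coef = dl_dg * 2.0 * h[j]  # chain rule through goodness = sum(h**2)
            for k in range(len(row)):
                row[k] -= self.lr * coef * x[k]

# Hypothetical toy data: one "positive" and one "negative" input pattern.
positive = [1.0, 1.0, 0.0, 0.0]
negative = [0.0, 0.0, 1.0, 1.0]

layer = FFLayer(n_in=4, n_out=16)
for _ in range(200):
    layer.update(positive, is_positive=True)
    layer.update(negative, is_positive=False)

print(f"p(positive | pos) = {layer.p_positive(positive):.3f}")
print(f"p(positive | neg) = {layer.p_positive(negative):.3f}")
```

Because each layer only needs its own forward activities to compute its update, no error signal has to be propagated backward through the hardware, which is the property that makes FF a candidate learning procedure for mortal computation.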

If we do give up on the separation of software and hardware, we get something I call mortal computation. And it obviously has big disadvantages, but it also has some huge advantages. So I started investigating mortal computation in order to be able to run things like large language models for much less energy and, in particular, to be able to train them using much less energy.

My main contribution is just to say that I think these super intelligences may happen much faster than I used to think. Bad actors are going to want to use them for doing things like manipulating electorates. They’re already using them in the States and many other places for that, and for winning wars.

What concerns Hinton the most is that superintelligent systems will learn to be extremely good at deceiving people. “And once you’re very good at deceiving people, you can get people to perform whatever actions you like. So, for example, if you wanted to invade a building in Washington, you don’t need to go there. You deceive people into thinking they’re saving democracy by invading the building. And I find that very scary. I can’t see how to prevent this from happening.”

I’m old and what I’m hoping is a lot of young and brilliant researchers like you will figure out how we can have these super intelligences, which will make life much better for us without them taking control.

One advantage we have, one fairly small advantage, is that these things didn’t evolve. We built them. And it may be that because they didn’t evolve, they don’t have the competitive aggressive goals that hominids have. And maybe that will help. And maybe we can give them ethical principles. But at present, I’m just nervous, because I don’t know any examples of more intelligent things being controlled by less intelligent things when the intelligence gap is big.

At 75, Dr. Hinton remains an influential voice, driving new AI research that aims to improve our future while ensuring that we can control the technology.

Advancing AI in the East: BAAI’s WuDao 3.0 is now fully Open-Sourced

After the opening ceremony, BAAI Dean TieJun Huang took center stage. He introduced three significant advancements from BAAI, all unified under a single central theme: the official release of Wu Dao Project 3.0 to the open-source community. These advancements encompass the Aquila large language model, the FlagEval large-model evaluation system and open platform, a series of vision-centric large models, and a series of multimodal models under the Wu Dao banner.

In 2021, the WuDao large-scale model project set a record as the ‘first in China + largest in the world’. The series has now reached the ‘Wu Dao 3.0’ version, which covers large foundation models for language, vision, and multimodal tasks and has entered a new phase: it is now fully open source.

WuDao Aquila + FlagEval: Building Dual Benchmarks for Large Model Capabilities and Evaluation Standards

The WuDao Aquila series of large models comprise Aquila foundation models (7B and 33B), AquilaChat dialogue models (7B, 33B) and AquilaCode-7B ‘text-to-code’ generation model.

The WuDao Aquila language model is the first open-source language model that combines bilingual Chinese and English knowledge, a commercial-use license, and compliance with China’s domestic data requirements. It was trained from scratch on high-quality Chinese and English corpora, and through careful data-quality control and various training optimizations, it outperforms other open-source models while using smaller datasets and shorter training time. Subsequent updates and versions will continue to be open-sourced.

GitHub:

https://github.com/FlagAI-Open/FlagAI/tree/master/examples/Aquila

Revolutionizing Model Evaluation: Introducing the FlagEval Platform

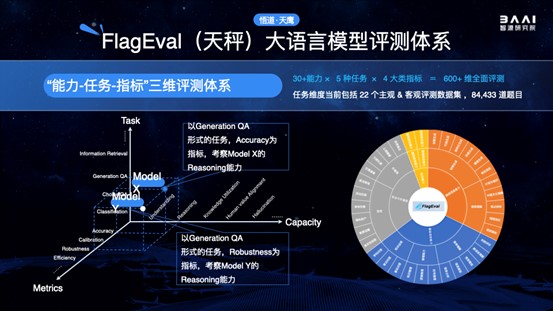

FlagEval, also known as Tiancheng (Chinese for “Libra”), aims to establish a scientific, fair, and open evaluation benchmark, methodology, and toolset to help researchers comprehensively assess the performance of foundation models and training algorithms. It also explores the use of AI methods to assist in subjective evaluations, greatly enhancing the efficiency and objectivity of evaluations.

FlagEval pioneered a granular, three-dimensional “capability-task-metric” evaluation framework that delineates the cognitive boundaries of foundation models and delivers visualized results across 30+ capabilities, 5 tasks, and 4 metric categories (including accuracy, efficiency, and robustness). Altogether, this covers over 600 evaluation dimensions and draws on 22 subjective and objective datasets with 84,433 questions, with more datasets being integrated continually. FlagEval also fosters interdisciplinary research with psychology, education, ethics, and other social sciences for a more holistic evaluation of large language models.
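
One plausible reading of these figures (an assumption; the article does not state how the dimension count is derived) is that each evaluation dimension is one cell of the capability × task × metric grid:

```latex
\underbrace{30}_{\text{capabilities}} \times \underbrace{5}_{\text{tasks}} \times \underbrace{4}_{\text{metric categories}} = 600 \ \text{evaluation dimensions}
```

Since the capability count is given as 30+, the product is a lower bound, consistent with the “over 600” dimensions cited.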

The FlagEval open evaluation platform (flageval.baai.ac.cn) is now open for application.

WuDao Vision Series of Large Models: Achieved Six Internationally Leading Technological Breakthroughs, Illuminating the Dawn of Generalization

Large language models have seen a sharp surge in recent years, and the same is true of large vision-centric models, which are used for classification, localization, detection, and segmentation tasks.

The WuDao Vision system systematically addresses a series of bottleneck problems in computer vision, such as task unification, model scaling, and data efficiency. Its achievements include:

- The large multimodal model Emu completes everything in multimodal sequences

- The best robust billion-scale visual foundation model EVA

- A generalist segmentation model that segments everything in context

- The pioneering generalist model Painter, with an “image”-centric solution for in-context visual learning

- The best open-source CLIP model EVA-CLIP with 5 billion parameters

- The zero-shot video editing technique vid2vid-zero, which edits videos from simple prompts

Open-Source to All: The FlagOpen Large Model Open-Sourcing Platform Is Being Upgraded, and the Second Phase of the Large-Scale, Commercially Viable COIG Dataset Has Been Launched

Dean Tiejun Huang noted that large-scale model technology is not monopolized by any single institution or company; it must be built and developed collectively. He emphasized the urgent need for organizations and countries to jointly build the foundational algorithm systems of an intelligent society. To that end, BAAI has led many efforts to build an open-source ecosystem.

The FlagOpen large model open-source platform, built by BAAI, was released earlier this year and, after a period of quiet growth, has undergone a series of upgrades aimed at reliably accelerating the development of large-scale models.

Regarding datasets, BAAI open-sourced COIG, the first large-scale, commercially usable Chinese open instruction generalist dataset. The first phase of COIG released 191,000 instruction data entries. The second phase is building the largest continuously updated Chinese multi-task instruction dataset: it integrates more than 1,800 massive open-source datasets, involves manually rewriting 390 million instruction data entries, and provides comprehensive data selection and version control tools to meet users’ needs.

BAAI 2023 – Welcoming Global Partnerships between industry frontrunners and trailblazing academics.

During two eventful days of inspiring keynotes, high-level tech talks, interactive forums, and networking opportunities, the host, the Beijing Academy of Artificial Intelligence (BAAI), curated a wide variety of engaging topics, including BAAI’s annual progress report, cutting-edge technologies in foundation models, brain-inspired computing, new infrastructure and intellectual operation for large-scale models, vision and multimodal models, generative models, AI systems, large models based on cognitive neuroscience, AI for the life sciences, autonomous driving, AI open source, and more.

The annual BAAI Conference is always a highly anticipated event in the Eastern world’s AI domain; people start speculating months in advance about potential keynote speakers and standout discussion topics. By organizing large-scale gatherings such as the BAAI Conference, now successfully held for the fifth consecutive year, BAAI is proud to provide pioneering practitioners with accessible resources and consistent support.

Beyond the BAAI Conference, a dynamic AI ecosystem thrives, including the BAAI Scholars’ Society, the BAAI Community, and the Qingyuan Society. The BAAI Scholars’ Society is a distinguished group of nearly a hundred AI academics who explore AI’s uncharted territories with unrestrained curiosity. The BAAI Community stands as a beacon for AI professionals, drawing a community of over 120,000 members and hosting more than a hundred seminars each year. The Qingyuan Society is a platform that connects over 1,000 talented young AI scholars from around the globe.

As a well-established non-profit research institute that has been influential in pushing forward innovative collaborations between academia and industry, BAAI recognizes the importance of international efforts in tackling ever-evolving challenges in AI.
