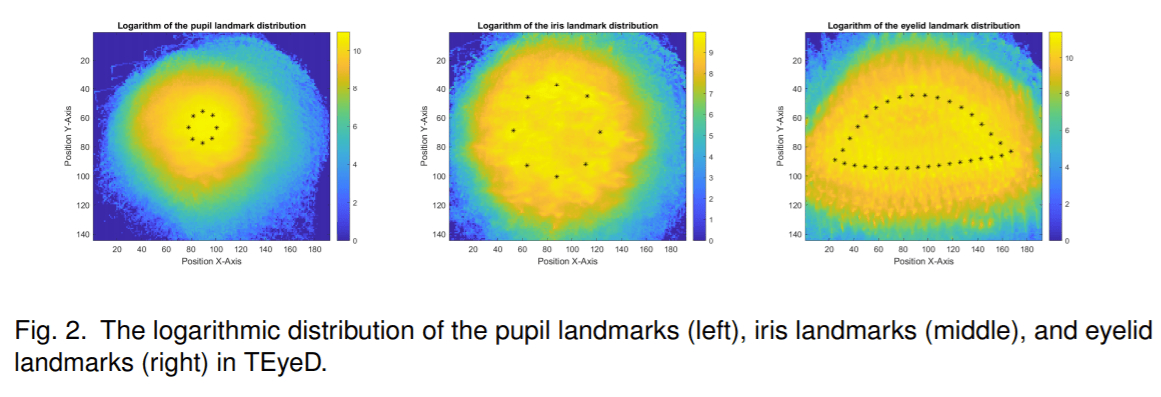

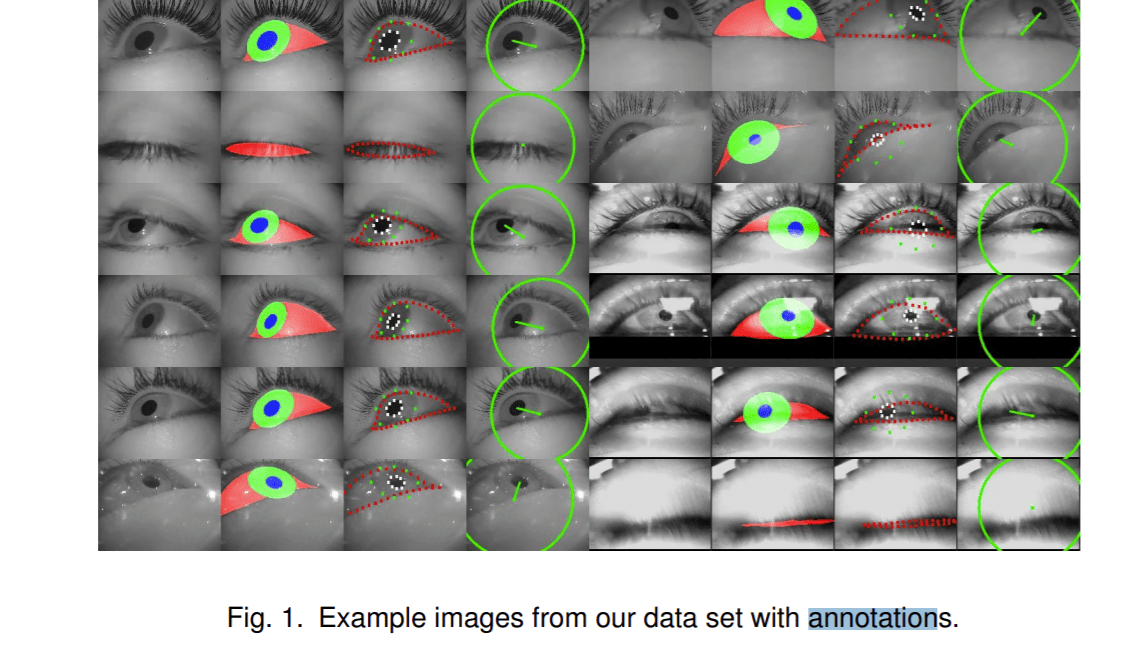

A trio of researchers from Germany’s University of Tübingen has introduced a unified dataset of over 20 million human eye images captured using seven different head-mounted eye trackers. Dubbed TEyeD, the dataset is the largest of its kind and includes 2D and 3D annotations along with eye movement types. Landmarks and semantic segmentations are provided for the pupils, irises and eyelids to enable shift-invariant gaze estimation.

In the paper *TEyeD: Over 20 million real-world eye images with Pupil, Eyelid, and Iris 2D and 3D Segmentations, 2D and 3D Landmarks, 3D Eyeball, Gaze Vector, and Eye Movement Types*, the researchers explain that their goal in creating the dataset is to provide comprehensive eye-related information for a broad range of scenarios.

The researchers believe human eye movements could revolutionize how we interact with computer systems. The gaze signal, for example, could reduce the rendering computation needed for head-mounted displays via foveated rendering, in which only the region the user is looking at is rendered in full detail. Coupling eye movement analysis with modern interactive display technologies such as VR/AR and gaming could also inspire a variety of new applications or enable people with disabilities to better interact with their environments via special hardware devices.

The dataset also has potential in other domains. Eyelid closure frequency, for example, can be used to monitor fatigue, while pupil size can serve as a basis for estimating a person’s cognitive load during a specific task.
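As a rough illustration of the fatigue-monitoring idea, the sketch below counts eyelid closures in a per-frame "openness" signal and converts the count to closures per minute. Everything here is an assumption made for illustration: the openness scale (0 for fully closed, 1 for fully open), the `closed_below` threshold, and both function names are hypothetical and do not reflect TEyeD's actual annotation format or the authors' code.

```python
from typing import Sequence


def count_eyelid_closures(openness: Sequence[float], closed_below: float = 0.2) -> int:
    """Count open-to-closed transitions in a per-frame eyelid openness signal.

    `openness` is a hypothetical signal in [0, 1]; values below
    `closed_below` (an assumed threshold) are treated as a closed eye.
    """
    closures = 0
    was_closed = False
    for value in openness:
        is_closed = value < closed_below
        # A new closure starts only when the eye was open in the previous frame.
        if is_closed and not was_closed:
            closures += 1
        was_closed = is_closed
    return closures


def closures_per_minute(
    openness: Sequence[float], fps: float, closed_below: float = 0.2
) -> float:
    """Convert the closure count into a per-minute rate for a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    if len(openness) == 0:
        return 0.0
    closures = count_eyelid_closures(openness, closed_below)
    # Recording length in minutes is len(openness) / fps / 60.
    return closures * 60.0 * fps / len(openness)
```

A rising closure rate over a recording could then be treated as one possible fatigue indicator, although any usable threshold would have to be calibrated on real data.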

The researchers note that challenges such as different viewing angles and lighting conditions have hindered eye tracking in real-world applications. Unlike previously published eye datasets, which were built using a single type of eye-tracking device and focused on specific tasks, TEyeD draws from seven different head-mounted eye trackers, two of which are integrated into VR or AR devices. This provides broader eye-related information captured at different frequencies and resolutions and across a wide range of tasks such as driving a car, driving in a simulator, and various indoor and outdoor activities.

TEyeD includes the NNGaze, LPW, GIW, ElSe, ExCuSe and PNET datasets as well as new images, all integrated with a rich set of annotations and ground-truth 3D landmarks and 3D segmentations. Together, the images from existing datasets and the new images bring the TEyeD total to more than 20 million images, 15 million of which appear in a dataset for the first time.

TEyeD is a unique and coherent resource that the team hopes can establish a valuable foundation for further studies in computer vision and boost the performance of eye tracking and gaze estimation in VR and AR applications and beyond.

Data and code for the project are available as a Microsoft cloud shared file, and the paper *TEyeD: Over 20 million real-world eye images with Pupil, Eyelid, and Iris 2D and 3D Segmentations, 2D and 3D Landmarks, 3D Eyeball, Gaze Vector, and Eye Movement Types* is available on arXiv.

Journalist: Fangyu Cai | Editor: Michael Sarazen
