Transformer-like neural network architectures have played a game-changing role in natural language processing (NLP), and are now increasingly being applied in the field of Computer Vision (CV) and related research. A paper submitted to ICLR 2021 proposes LambdaNetworks, a new transformer-specific method designed to solve the challenge of expensive attention maps in modeling long-range interactions.

In machine learning, attention mechanisms are a standard method for capturing long-range interactions in data. However, using attention on long-sequence inputs is difficult due to its huge quadratic memory footprint. For example, 32 GB of memory is required to apply a single multi-head attention layer to a batch of 256 of 64×64 input images with 8 heads, which is excessive in practice.

The paper LambdaNetworks: Modeling Long-Range Interactions Without Attention proposes a novel concept called “lambda layers,” a class of layers that provides a general framework for capturing long-range interactions between an input and a structured set of context elements. The paper also introduces “LambdaResNets”, a family architecture based on the layers that reaches SOTA accuracies on ImageNet, and is approximately 4.5x faster than the popular modern machine learning accelerator EfficientNets.

A transformer-like architecture, lambda layers transform available contexts into single linear functions (lambdas), which are then applied to each input separately.

While typical attention mechanisms define a similarity kernel between the input and context elements, lambda layers instead summarize contextual information into a fixed-size linear function, thus avoiding the prohibitive memory requirements. This suggests the applicability of lambda layers for dealing with long sequences or high-resolution images.

In experiments, the research group tested the lambda layers and attention mechanisms on ImageNet classification with a ResNet50 architecture, with the lambda layers showing a strong advantage with just a fraction of the parameter cost.

The lambda layers also deliver better results in both accuracy and memory-efficiency than self-attention alternatives.

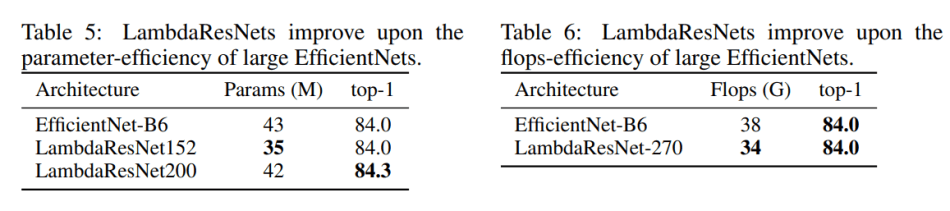

The proposed LambdaResNets family of architectures meanwhile were shown to significantly improve the speed-accuracy tradeoff of image classification models. LambdaResNets performed better on both depth and image scale than the popular EfficientNets, and achieved state-of-art performance on ImageNet accuracy.

The paper LambdaNetworks: Modeling Long-Range Interactions Without Attention is currently under double-blind review by ICLR 2021 and is available on OpenReview. The PyTorch code can be found on the project GitHub.

Analyst: Victor Lu | Editor: Michael Sarazen; Fangyu Cai

Synced Report | A Survey of China’s Artificial Intelligence Solutions in Response to the COVID-19 Pandemic — 87 Case Studies from 700+ AI Vendors

This report offers a look at how China has leveraged artificial intelligence technologies in the battle against COVID-19. It is also available on Amazon Kindle. Along with this report, we also introduced a database covering additional 1428 artificial intelligence solutions from 12 pandemic scenarios.

Click here to find more reports from us.

We know you don’t want to miss any news or research breakthroughs. Subscribe to our popular newsletter Synced Global AI Weekly to get weekly AI updates.

Pingback: [R] ‘Lambda Networks’ Achieve SOTA Accuracy, Save Massive Memory – tensor.io

Nothing cooler than when tech meets science- fascinating read.

Mo Seo | BSR

http://www.buysellram.com