“Lip-sync for your life!” When the RuPaul’s Drag Race host drops his signature challenge, the music swells and a dolled-up drag queen launches into lip sync, keen to slay and stay. The best of the bunch make it look easy — but is it?

While RuPaul may rule the world of showbiz lip-syncing, in the field of computer vision it’s algorithms that do the work. And no, it’s not easy, especially when generating talking-head lip sync to match target speech in a different language. Recently, a team of researchers from the International Institute of Information Technology (IIIT) in Hyderabad, India and the UK’s University of Bath dropped “Wav2Lip,” a novel lip-synchronization model that outperforms current approaches by a large margin in both quantitative metrics and human evaluations.

In the paper A Lip Sync Expert Is All You Need for Speech to Lip Generation In the Wild, the team shows how Wav2Lip generates accurate lip-syncing on video and audio pairs by using a pretrained expert lip-sync discriminator that is accurate in detecting sync in real videos.

Existing lip-sync models can generate reasonably accurate lip movements on a static image or in videos with a specific speaker. For example, the Montreal Institute for Learning Algorithms (MILA) previously introduced ObamaNet, which leverages the massive amount of available video data on the former US President. Such approaches however struggle with arbitrary identities and dynamic and unconstrained talking-head videos, where the generated results often appear to be out-of-sync with the input audio.

The researchers identify two main reasons for this, namely that the loss function for face reconstruction and the discriminator loss are both inadequate for penalizing out-of-sync generation. The team notes that the face reconstruction loss is computed for the whole image, where the important lip region corresponds to less than four percent of the total loss. Consequently, “a lot of surrounding image reconstruction is first optimized before the network starts to perform fine-grained lip shape correction.” Moreover, the lip-sync discriminator used in LipGAN has only reached 56 percent accuracy for detecting out-of-sync audio-lip pairs. The team therefore used a pretrained discriminator that is already 91 percent accurate at detecting lip-sync errors. In this way, the output will focus on matching the audio-lip pairs, instead of on visual artifacts as with current prominent architectures such as LipGAN.

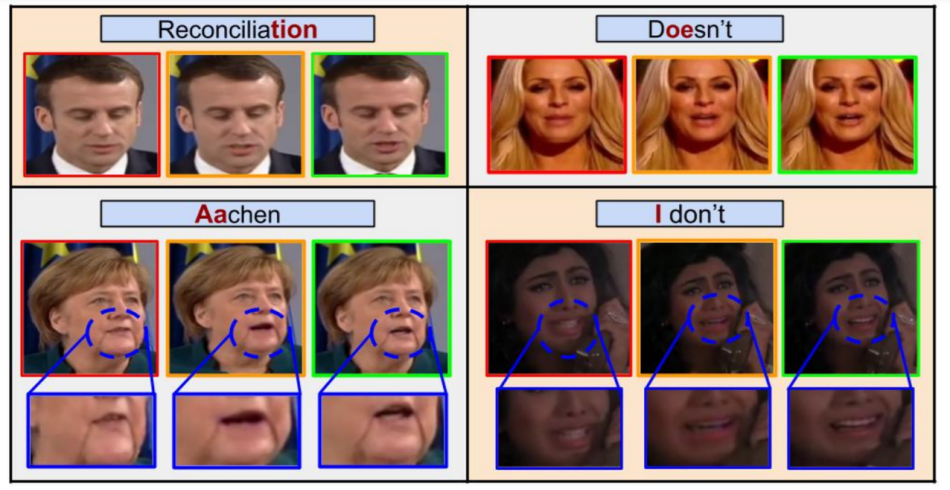

To enable fair and accurate evaluations of lip-synchronization in unconstrained videos, the team created an evaluation framework with new benchmarks and metrics. They amassed a challenging set of unseen, in-the-wild videos from YouTube — ReSyncED — to benchmark lip-sync model performance. In experiments, the novel Wav2Lip model generated realistic talking-head videos with seamless synthetic lip-synchronization, generating more natural lip shapes. Human evaluators preferred the proposed approach’s visual quality over existing methods and unsynced versions in more than 90 percent of instances, while on the new Lip-Sync Error-Distance and Lip-Sync Error-Confidence metrics, the new method’s accuracy wasalmost as good as real synced video.

While the Wav2Lip model may not dazzle like drag queens sashaying down the runway, it may amaze in real-world applications such as movie dubbing, lecture translation and even the cross-cultural lip-syncing of CGI characters.

The paper A Lip Sync Expert Is All You Need for Speech to Lip Generation In The Wild is available on arXiv, and additional interactive demos can be found at the lipsync website.

Reporter: Fangyu Cai | Editor: Michael Sarazen

Synced Report | A Survey of China’s Artificial Intelligence Solutions in Response to the COVID-19 Pandemic — 87 Case Studies from 700+ AI Vendors

This report offers a look at how China has leveraged artificial intelligence technologies in the battle against COVID-19. It is also available on Amazon Kindle. Along with this report, we also introduced a database covering additional 1428 artificial intelligence solutions from 12 pandemic scenarios.

Click here to find more reports from us.

We know you don’t want to miss any story. Subscribe to our popular Synced Global AI Weekly to get weekly AI updates.

0 comments on “IIIT Hyperbad’s ‘Wav2Lip’ Boosts Lip-Sync Video Performance”