Facebook has introduced a model that turns ordinary two-dimensional pictures into 3D photos. The method, first presented at this month's virtual SIGGRAPH 2020 computer graphics conference, works from a single image and runs directly on a mobile device. Converting photos to 3D is not new on today's high-end smartphones, but the proposed system is designed to run even on low-end phones and without an Internet connection.

Given a single-shot picture as input, the system uses learning-based methods to estimate the depth of the scene and to synthesize content for the regions that become visible under parallax. It does this in four stages:

Stage 1: Depth Estimation. The researchers proposed a new architecture, Tiefenrausch, with three improvements:

- Efficient block structure that is fast on mobile devices

- New network design that balances accuracy, latency, and model size using a neural architecture search algorithm

- Reduced model size and latency through 8-bit quantization
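
The article does not specify how Tiefenrausch is quantized, so the sketch below shows generic per-tensor affine 8-bit quantization, a common scheme, rather than Facebook's implementation. Each float weight is approximated as `scale * (q - zero_point)`, where `q` is an unsigned byte; the function names `quantize_uint8` and `dequantize_uint8` are hypothetical.

```python
from typing import List, Tuple

# Sketch only: generic per-tensor affine quantization, not necessarily the
# scheme used for Tiefenrausch.


def quantize_uint8(weights: List[float]) -> Tuple[List[int], float, int]:
    """Approximate each weight w as scale * (q - zero_point) with q in [0, 255]."""
    # Stretch the range to include 0.0 so that zero is exactly representable.
    lo = min(min(weights), 0.0)
    hi = max(max(weights), 0.0)
    scale = (hi - lo) / 255.0 or 1.0  # fall back to 1.0 for an all-zero tensor
    zero_point = round(-lo / scale)
    # Round to the nearest quantization step and clamp into the 8-bit range.
    q = [min(255, max(0, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point


def dequantize_uint8(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Map 8-bit codes back to approximate float weights."""
    return [scale * (v - zero_point) for v in q]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
q, scale, zp = quantize_uint8(weights)
restored = dequantize_uint8(q, scale, zp)
for w, r in zip(weights, restored):
    print(f"{w:+.4f} -> {r:+.4f}")
```

Each restored weight lies within half a quantization step (`scale / 2`) of the original, and storing one byte per weight instead of a 4-byte float32 shrinks weight storage roughly fourfold, which is the kind of size reduction the third improvement targets.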

Stage 2: Layer Generation. The researchers handled depth discontinuities by grouping them into curve-like features (colour-coded, (a) in the above illustration) and inferring spatial constraints that better shape their growth (dashed lines, see above). The pixels are lifted onto a layered depth image (LDI), and the researchers synthesized new geometry by running an expansion algorithm for 50 iterations, yielding a multi-layered LDI with enough overlap to display the scene with parallax.
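
To make the layered depth image concrete, here is a minimal sketch that lifts a depth map into a one-layer LDI and cuts the links between neighbouring pixels wherever the disparity (1/depth) jumps by more than a threshold. The `LDIPixel` class, `lift_to_ldi`, `discontinuity_pixels`, the 4-neighbour linking, and the disparity threshold are all illustrative assumptions; the grouping into curve-like features, the spatial constraints, and the 50-iteration expansion are not implemented here.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

Color = Tuple[int, int, int]
# Hypothetical 4-neighbourhood used to link LDI samples.
DIRECTIONS = {"left": (-1, 0), "right": (1, 0), "up": (0, -1), "down": (0, 1)}


@dataclass(eq=False)
class LDIPixel:
    """One sample of a layered depth image; an (x, y) location may hold several."""
    x: int
    y: int
    depth: float
    color: Color
    # Link to the neighbouring sample in each direction; None means "not connected"
    # (image border or a depth discontinuity).
    links: Dict[str, Optional["LDIPixel"]] = field(default_factory=dict, repr=False)


def lift_to_ldi(depth: List[List[float]], color: List[List[Color]],
                max_disparity_jump: float) -> Dict[Tuple[int, int], List[LDIPixel]]:
    """Lift a depth map into a one-layer LDI, cutting links across depth discontinuities."""
    h, w = len(depth), len(depth[0])
    ldi = {(x, y): [LDIPixel(x, y, depth[y][x], color[y][x])]
           for y in range(h) for x in range(w)}
    for (x, y), samples in ldi.items():
        p = samples[0]  # a freshly lifted image has exactly one layer per location
        for name, (dx, dy) in DIRECTIONS.items():
            neighbour = ldi.get((x + dx, y + dy))
            if neighbour is None:
                p.links[name] = None  # outside the image
                continue
            q = neighbour[0]
            # Assumed test: a large jump in disparity (1 / depth) marks an occlusion boundary.
            jump = abs(1.0 / p.depth - 1.0 / q.depth)
            p.links[name] = None if jump > max_disparity_jump else q
    return ldi


def discontinuity_pixels(ldi: Dict[Tuple[int, int], List[LDIPixel]]) -> List[Tuple[int, int]]:
    """Locations whose link to an in-image neighbour was cut by a depth jump."""
    found = set()
    for (x, y), samples in ldi.items():
        for p in samples:
            for name, (dx, dy) in DIRECTIONS.items():
                if (x + dx, y + dy) in ldi and p.links[name] is None:
                    found.add((x, y))
    return sorted(found)


# Usage: a near surface (depth 1) next to a far one (depth 4).
ldi = lift_to_ldi([[1.0, 1.0, 4.0, 4.0]], [[(0, 0, 0)] * 4], max_disparity_jump=0.5)
print(discontinuity_pixels(ldi))  # [(1, 0), (2, 0)]
```

The pixels on either side of a cut link are where a real system would grow additional layers so that hidden background can be revealed when the viewpoint shifts.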

Stage 3: Colour Inpainting. The researchers performed inpainting directly on the LDI structure by traversing the connections between LDI pixels to aggregate a local neighbourhood around each pixel. This allowed them to train the network in 2D and then reuse the pretrained weights for LDI inpainting. They also designed a new architecture, Farbrausch, that brings the inpainting network down to a mobile-friendly size.
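
The neighbourhood-aggregation idea can be sketched as a breadth-first walk over LDI links that collects samples into a square window keyed by their 2D offset, plus a mask marking slots that could not be reached (for example, across a cut link). The result has the same shape as an ordinary image patch, which illustrates how weights trained on 2D images could be applied to LDI samples. `Node`, `gather_patch`, `to_grid`, grayscale values, and the square window are hypothetical simplifications; this is not Farbrausch or the paper's code.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple

OFFSETS = {"left": (-1, 0), "right": (1, 0), "up": (0, -1), "down": (0, 1)}


@dataclass(eq=False)
class Node:
    """Hypothetical minimal LDI sample: a grayscale value plus links to neighbours."""
    value: float
    links: Dict[str, Optional["Node"]] = field(default_factory=dict, repr=False)


def gather_patch(center: Node, radius: int) -> Dict[Tuple[int, int], float]:
    """Breadth-first walk over LDI links, keyed by (dx, dy) offset from the center sample."""
    patch = {(0, 0): center.value}
    seen = {id(center)}
    queue = deque([(center, 0, 0)])
    while queue:
        node, dx, dy = queue.popleft()
        for name, (ox, oy) in OFFSETS.items():
            nbr = node.links.get(name)
            nx, ny = dx + ox, dy + oy
            # Skip cut links, visited samples, filled slots, and anything outside the window.
            if (nbr is None or id(nbr) in seen or (nx, ny) in patch
                    or max(abs(nx), abs(ny)) > radius):
                continue
            seen.add(id(nbr))
            patch[(nx, ny)] = nbr.value
            queue.append((nbr, nx, ny))
    return patch


def to_grid(patch: Dict[Tuple[int, int], float], radius: int,
            fill: float = 0.0) -> Tuple[List[List[float]], List[List[bool]]]:
    """Dense (2r+1) x (2r+1) window plus a validity mask, shaped like a 2D image patch."""
    size = 2 * radius + 1
    values = [[fill] * size for _ in range(size)]
    mask = [[False] * size for _ in range(size)]
    for (dx, dy), v in patch.items():
        values[dy + radius][dx + radius] = v
        mask[dy + radius][dx + radius] = True
    return values, mask


# Usage: a fully linked 3x3 grid, then cut every link between columns 1 and 2.
grid = [[Node(float(3 * y + x)) for x in range(3)] for y in range(3)]
for y in range(3):
    for x in range(3):
        for name, (ox, oy) in OFFSETS.items():
            if 0 <= x + ox < 3 and 0 <= y + oy < 3:
                grid[y][x].links[name] = grid[y + oy][x + ox]
full = gather_patch(grid[1][1], radius=1)
for y in range(3):
    grid[y][1].links["right"] = None
    grid[y][2].links["left"] = None
cut = gather_patch(grid[1][1], radius=1)
values, mask = to_grid(cut, radius=1)
print(len(full), len(cut))
```

Walking links rather than raw pixel coordinates is what keeps samples from the far side of a depth discontinuity out of the window.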

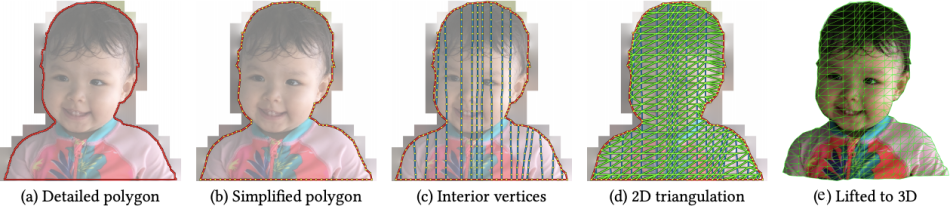

Stage 4: Meshing. A custom algorithm constructs a simplified 3D triangle mesh directly. It exploits the 2.5D structure of the representation by operating in the 2D texture atlas domain: it first simplifies and triangulates the chart polygons in 2D, then lifts them to 3D.
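
The paper's meshing algorithm is custom; as a rough stand-in, the sketch below follows the same simplify-in-2D, triangulate-in-2D, lift-to-3D order using two generic techniques: Visvalingam-style vertex removal for simplification and ear clipping for triangulation. Lifting assumes a hypothetical pinhole camera with focal length `focal`, principal point (`cx`, `cy`), and a `depth_at(u, v)` lookup; all function names are illustrative.

```python
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float]
# Sketch only: generic stand-ins for the paper's custom meshing algorithm.


def cross(o: Point, a: Point, b: Point) -> float:
    """z-component of (a - o) x (b - o); positive when o -> a -> b turns left."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def simplify(poly: Sequence[Point], min_area: float) -> List[Point]:
    """Visvalingam-style: drop the vertex spanning the smallest triangle until all exceed min_area."""
    pts = list(poly)
    while len(pts) > 3:
        n = len(pts)
        areas = [abs(cross(pts[k - 1], pts[k], pts[(k + 1) % n])) / 2 for k in range(n)]
        k = min(range(n), key=areas.__getitem__)
        if areas[k] >= min_area:
            break
        del pts[k]
    return pts


def ear_clip(poly: Sequence[Point]) -> List[Tuple[int, int, int]]:
    """Triangulate a simple polygon by ear clipping; returns index triples into poly."""
    idx = list(range(len(poly)))
    signed_area = sum(cross((0.0, 0.0), poly[i], poly[(i + 1) % len(poly)]) for i in idx) / 2
    if signed_area < 0:
        idx.reverse()  # work with counter-clockwise order
    tris = []
    while len(idx) > 3:
        for k in range(len(idx)):
            i0, i1, i2 = idx[k - 1], idx[k], idx[(k + 1) % len(idx)]
            a, b, c = poly[i0], poly[i1], poly[i2]
            if cross(a, b, c) <= 0:
                continue  # reflex or degenerate corner: not an ear
            others = (poly[j] for j in idx if j not in (i0, i1, i2))
            if any(cross(a, b, p) >= 0 and cross(b, c, p) >= 0 and cross(c, a, p) >= 0
                   for p in others):
                continue  # another vertex lies inside the candidate triangle
            tris.append((i0, i1, i2))
            del idx[k]
            break
        else:
            raise ValueError("no ear found; polygon is probably not simple")
    tris.append((idx[0], idx[1], idx[2]))
    return tris


def backproject(u: float, v: float, z: float, focal: float,
                cx: float, cy: float) -> Tuple[float, float, float]:
    """Assumed pinhole camera: pixel (u, v) at depth z -> camera-space point (X, Y, Z)."""
    return ((u - cx) * z / focal, (v - cy) * z / focal, z)


def mesh_chart(poly: Sequence[Point], depth_at: Callable[[float, float], float],
               focal: float, cx: float, cy: float, min_area: float = 0.5):
    simple = simplify(poly, min_area)  # 1. simplify the chart outline in 2D
    tris = ear_clip(simple)            # 2. triangulate in 2D
    verts = [backproject(u, v, depth_at(u, v), focal, cx, cy) for u, v in simple]  # 3. lift to 3D
    return verts, tris


square = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (2.0, 2.0), (0.0, 2.0)]
verts, tris = mesh_chart(square, lambda u, v: 2.0, focal=1.0, cx=0.0, cy=0.0)
print(verts, tris)
```

Because the outline is simplified before triangulation, only the few surviving vertices are lifted to 3D. Greedy vertex removal can, in rare cases, make a polygon self-intersect, in which case `ear_clip` raises rather than returning a bad mesh.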

Altogether, processing takes just a few seconds, even on low-end mobile devices with no Internet connection. In experiments, the method achieved performance and accuracy comparable to current state-of-the-art 3D image generation approaches.

The paper "One Shot 3D Photography" is on arXiv, and the code is on GitHub.

Analyst: Reina Qi Wan | Editor: Michael Sarazen; Fangyu Cai

