Baidu has released ERNIE (Enhanced Representation through kNowledge IntEgration), a new language representation model built on knowledge integration that outperforms Google’s state-of-the-art BERT (Bidirectional Encoder Representations from Transformers) on Chinese language tasks including natural language inference, semantic similarity, named entity recognition, sentiment analysis, and question-answer matching.

In recent years, unsupervised pre-trained language models have significantly improved performance on various natural language processing tasks. Methods like CoVe, ELMo, GPT and BERT mainly build models from the raw language signal rather than from semantic units in the text. Unlike BERT, ERNIE features knowledge integration enhancement: it learns real-world semantic relations from massive amounts of data. It directly models prior semantic knowledge units, which enhances its ability to learn semantic representations.

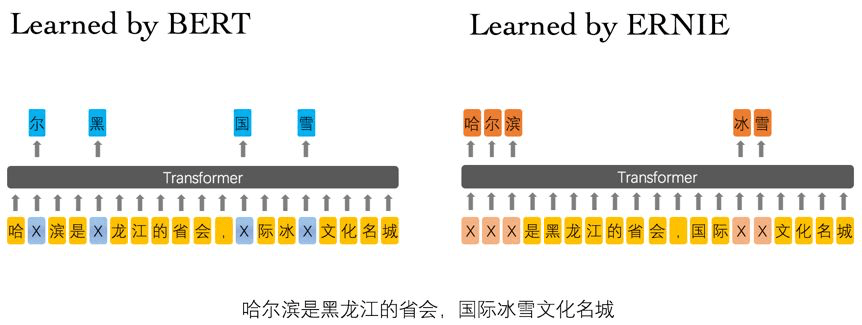

Example:

Harbin is the capital of Heilongjiang Province, an international ice and snow cultural city.

- Learned by BERT: 哈 [mask] 滨是 [mask] 龙江的省会,[mask] 际冰 [mask] 文化名城。

- Learned by ERNIE: [mask] [mask] [mask] 是黑龙江的省会,国际 [mask] [mask] 文化名城。

In the example sentence above, BERT can identify the Chinese character “尔 (er)” through the locally co-occurring characters 哈 (ha) and 滨 (bin), but the model fails to learn any knowledge related to the word “Harbin (哈尔滨)” as a whole. ERNIE, however, can extrapolate the relationship between Harbin (哈尔滨) and Heilongjiang (黑龙江) by analyzing implicit knowledge of words and entities, and infer that Harbin is the capital of Heilongjiang Province in China and also a city known for ice and snow.
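The difference between the two masking styles in the example can be illustrated with a minimal Python sketch. This is not Baidu's implementation: the function names `mask_characters` and `mask_units` are hypothetical, and the masked positions and semantic units are hand-picked to mirror the example, whereas a real pre-training pipeline would select them automatically.

```python
MASK = "[mask]"


def mask_characters(text, positions):
    """Character-level masking: hide single characters at the given indices."""
    position_set = set(positions)
    return [MASK if i in position_set else ch for i, ch in enumerate(text)]


def mask_units(text, units):
    """Unit-level masking: hide every character of each listed word or entity.

    `units` is supplied by hand here (an assumption for illustration).
    """
    tokens = list(text)
    for unit in units:
        start = text.find(unit)
        while start != -1:
            for i in range(start, start + len(unit)):
                tokens[i] = MASK
            start = text.find(unit, start + len(unit))
    return tokens


sentence = "哈尔滨是黑龙江的省会,国际冰雪文化名城。"

# Isolated characters are hidden; each can be guessed from its neighbours.
char_masked = mask_characters(sentence, [1, 4, 11, 14])

# Whole units are hidden; the model must rely on the rest of the sentence.
unit_masked = mask_units(sentence, ["哈尔滨", "冰雪"])

print("".join(char_masked))
print("".join(unit_masked))
```

With character-level masking, the hidden “尔” sits between 哈 and 滨, so local co-occurrence is enough to recover it. With unit-level masking, all of 哈尔滨 is hidden at once, so recovering it requires the surrounding context, such as “the capital of Heilongjiang”.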

As a character-based model, ERNIE comprehends and learns the meaning of words and the semantic relationships between them, which provides greater versatility and scalability compared to BERT. ERNIE is trained on multi-source data and knowledge collected from encyclopedia articles, news, and forum dialogues, which improves its performance in context-based knowledge reasoning.

Baidu researchers believe this technological breakthrough can be applied to various products and scenarios. Building on ERNIE, the team plans to conduct further research on integrating knowledge into pre-training semantic representation models, for example by using syntactic parsing or weakly supervised signals from other tasks, and to validate the idea in other languages.

Baidu named its language model after BERT’s Muppet pal Ernie from the popular US children’s television show Sesame Street. ERNIE’s code and model, which run on PaddlePaddle, are now open-sourced on GitHub.

Author: Herin Zhao | Editor: Michael Sarazen
