DeepMind’s 2018 AlphaGo Zero requires 300,000 times more computing power than AlexNet did in 2013. With larger-than-ever datasets and demanding high precision models to deal with, Deep Learning (DL) algorithms are understandably hungry for new hardware solutions.

On July 9th Professor Yoshua Bengio spoke on Computing Hardware for Emerging Intelligent Sensory Applications at the University of Toronto’s Bahen Center, as part of the Natural Sciences and Engineering Research Council of Canada (NSERC) Strategic Network talk series. Professor Bengio is a world-renown AI researcher and head of the Montreal Institute for Learning Algorithms.

Bengio introduced three conceptual approaches to hardware-friendly deep learning:

- Quantized Neural Networks (QNN)

- Equilibrium Propagation

- Cultural Evolution

Quantized Neural Networks (QNN)

Previous research has focused on quantizing information to obtain low-precision neural networks. Bengio’s team also attempted to minimize precision for training and inference, and found that when training neural networks with reduced precision the model learns to adapt, which can reduce the neural network’s activation values and weights from 32 or 16 bits to 1, 2, or 3 bits of precision.

The team quantized the feed-forward pass to produce a neural network that allows inference on low precision parameters at the end of training. High precision training is however still required to compute gradient descent and backpropagation. Gradient descent computation is a slow iterative process in that the algorithm gradually changes the value of weights through each iteration. If for instance, the neural network needed to gradually change binary weights, this process would require high precision to go from -1 to 1.

Once training is complete, the system gets rid of high precision and keeps only the sign extension. The system will also experience some loss when moving to low precision within the same architecture, thus introducing a trade-off between computation and precision.

Equilibrium Propagation

Equilibrium Propagation is a framework that allows Energy-Based Models (EBM) to perform learning in a way analogous to backpropagation. The term “Equilibrium” is inspired by real-world physical systems’ convergence to a resting state with zero net force. Bengio believes this is a riskier research direction, with less funding and researchers, but could influence the design of analog computing systems.

EBMs at a Glance

EBMs are dynamic physical systems that have energy — i.e. kinetic energy — moving from high to low energy configurations under the laws of thermodynamics. A neural network designed using the same principles has an energy function that governs how configurations evolve from high to low over time. Learning, in this case, is when the energy function configurations select the correct answer with low energy usage.

EBM systems are described by differential equations rather than some defined error function, making them unsuitable for backpropagation or graph-based inference. The question is: How to calculate a cost function that measures how well the physical system behaves? To find the answer, we can think of such energy functions under the assumption that physical system dynamics will converge to local minima.

EBMs Optimization

Bengio shows how to define and derive gradients for training EBMs. This is broken down into two parts based on the energy function: 1) Internal potential that governs how different neurons interact with each other; 2) External potential that defines the influence of the external world on the neurons.

Since training performs optimization, the optimization for the evolution of EBM states will be essentially the gradient descent of the energy. An explanation of this concept is shown in Figure 3. Each neuron will have two terms corresponding to the internal and external potential.

Training has two phases:

- Free Phase — the network attempts to converge to a fixed point and read predictions at the output.

- Weakly Clamped Phase — includes “nudging” the output towards the target (the target output corresponding to the input), propagation of error signals, and finding another fixed point.

These two phases are analogous to the forward and reverse passes in standard backpropagation.

EBMs in Practice

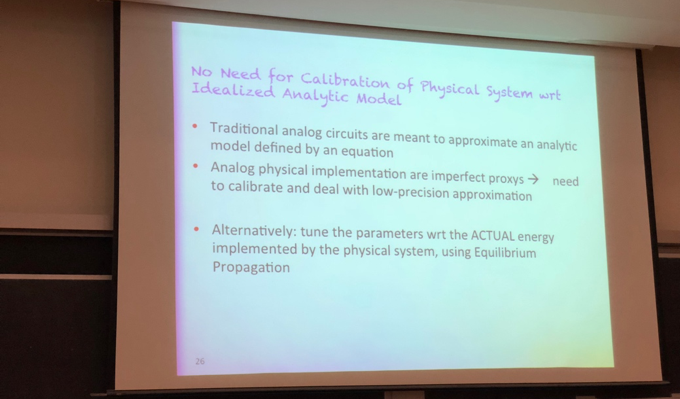

So what role does an energy-based system play in building analog hardware? A digital system is an approximation of analog parts. In order for the digital system to function effectively its components must operate according to some ideal. Modeling the transistors according to the V-C characteristic curve, for instance, will create high energy requirements for maintaining analog components’ operation in the correct regime.

A problem with building analog hardware circuits is that devices behave differently and not exactly according to the idealized schema. In addition, noise becomes an issue if the circuit is too small.

EBMs do not attempt to quantize noisy signals. Instead, they allow the system to treat its components as a whole. Since no calibration is required for such systems, the user can process the overall output of the circuit without focusing on the outputs of individual components, as shown in Figure 4. The overall output value corresponds to the metric of the user’s interest — in this case, energy.

Cultural Evolution

Bengio closed his talk by shifting from the brain neuron level up to the level of humans in society.

One question regarding hardware in deep learning is whether researchers can take advantage of parallel computation. It is widely known that GPUs work so well in AI research because they parallelize computations better than CPUs. As scientists build larger and more complex systems, the energy required for communication between different parts of the circuit becomes a bottleneck. This is most evident in GPUs and CPUs, when the chip must transfer large amounts of data from memory into the rest of the chip.

As shown in Figure 5, the state-of-the-art distributed training method isSynchronized Stochastic Gradient Descent (SGD). This simple distributed algorithm has a set of nodes, each with their own trained neural network. The nodes share information such as gradients and weights through a single server which broadcasts to the other nodes.

This type of information sharing becomes an issue when it comes to scaling the number of nodes. Considering the expanding dimensions of today’s systems, nodes must communicate a huge amount of information. How do we tackle this scalability problem? A potential solution proposed by Bengio is “Cultural Evolution.”

Cultural Evolution is a distributed training method for efficient communication, inspired by how human social networks develop and share new concepts through language.

We can consider the human brain as a collection of nodes with a huge number of synaptic weights. The way to synchronize two brains however, is not by sharing their weights, but by communicating ideas. How can this be achieved? By sharing “representations,” which are discrete summaries of activities in the nodes/neurons.

Hinton et al. explored synchronizing two networks by communicating their activations instead of weights. Their idea was that given two networks with different weights, the two networks would try to synchronize the functions which they are computing (instead of weights). A human example would be that even though A’s brain works differently than B’s brain, as long as both brains understand the same concepts, thay have a shared context which allows them to collaborate.

This approach is based on an older idea: training a single network that summarizes the knowledge of multiple networks. This is done is by using the outputs from the “teaching” networks as “targets” for the network in training. The difference with Cultural Evolution is that both networks are learning at the same time.

Bengio’s team experimented with these concepts and observed that in addition to the network’s own data targets, sharing the output between the networks as additional targets can greatly improve the training of the group of networks. Figure 5 gives a brief summary of Cultural Evolution:

Conclusion

Researchers are eager to find the most efficient way to develop deep-learning hardware for wider adoption and deployment. Earlier this month Synced published an article on Google AI chief Jeff Dean’s ML system architecture blueprint, detailing frontier research at Google and Facebook and diverging approaches to hardware optimization for ML. The AI community can only benefit if such multifarious approaches to hardware solutions provide useful inspirations for near-future research.

Author: Joshua Chou| Tech Editor: Hao Wang | Editor: Meghan Han Michael Sarazen

Pingback: Data Science newsletter – August 2, 2018 | Sports.BradStenger.com