Abstract

“There is no AI without robotics,” argues the author, Jean-Christophe Baillie, founder and president of Novaquark. In this article, he addresses what AI is, and why AlphaGo is not AI.

What is AI and What is not AI?

There is no doubt that AlphaGo, the Go-playing artificial intelligence designed by Google DeepMind, is a smart system. Since its victory over world champion Lee Sedol, similar deep learning approaches have been applied to difficult computational problems in many industries. Thanks to AlphaGo, the term “Artificial Intelligence” (AI) came under the spotlight (again). However, the author does not agree that AlphaGo is AI, because this kind of system cannot get us to full AI, that is, an Artificial General Intelligence (AGI). One of the key requirements for an AGI is that it is not limited by what its designer built in: it has to make sense of the world for itself and develop its own internal meaning for everything it encounters, hears, says, and does, just as humans do. By contrast, today’s AI programs do not understand what is going on and cannot handle problems outside their own domain. So what is AI, and how can a system come to understand what its symbols mean? This problem of meaning is perhaps the most fundamental problem of AI.

In 1990, cognitive scientist Stevan Harnad framed the problem of meaning in his paper “The Symbol Grounding Problem”[1]: how is whatever representation exists inside the system grounded in the real world outside? For example, suppose you had to learn Chinese as a second language, and the only source of information you had was a Chinese/Chinese dictionary. The trip through the dictionary would amount to a merry-go-round, passing endlessly from one meaningless symbol or symbol-string (the definientes) to another (the definienda), never coming to a halt on what anything meant. How can you ever get off the symbol/symbol merry-go-round? How is symbol meaning to be grounded in something other than just more meaningless symbols? This is the symbol grounding problem. The problem of meaning in AI was posed decades ago, but it remains unsolved to this day.
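The merry-go-round can be made concrete with a small Python sketch. The toy dictionary below is invented for illustration and does not come from Harnad's paper: every word is defined only by other words in the same dictionary. The hypothetical function `is_grounded` counts a word as grounded only if following its definitions eventually reaches a word in the `grounded` set, which stands for symbols tied to something outside the dictionary, such as perception.

```python
def is_grounded(word, dictionary, grounded, visiting=None):
    """Return True if following definitions from `word` reaches a grounded symbol."""
    if word in grounded:
        return True
    visiting = visiting or set()
    # A word already on the current path means we are going in circles;
    # a word with no entry cannot be looked up any further.
    if word in visiting or word not in dictionary:
        return False
    visiting = visiting | {word}
    return any(
        is_grounded(w, dictionary, grounded, visiting) for w in dictionary[word]
    )


# Hypothetical dictionary: each word is defined only in terms of other entries.
toy_dictionary = {
    "horse": ["animal", "mane"],
    "animal": ["creature"],
    "creature": ["animal"],
    "mane": ["hair", "horse"],
    "hair": ["mane"],
}

stuck = is_grounded("horse", toy_dictionary, grounded=set())
anchored = is_grounded("horse", toy_dictionary, grounded={"hair"})
```

With an empty `grounded` set, the lookup for "horse" only ever loops back on itself. As soon as a single word such as "hair" is assumed to be anchored outside the dictionary, the words defined through it can be traced to something meaningful.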

The problem of meaning in AI can be divided into four sub-problems:

1. How to structure the information the agent (human or AI) is receiving from the world?

The first problem is about structuring information. With the rapid growth of machine learning, especially deep learning and unsupervised learning, this problem has been well addressed in recent years. Tremendous progress, including AlphaGo, has been made partly because of advances in GPU technology, which is well suited to processing large amounts of information in parallel.

What effective algorithms such as deep learning do is take data that is redundant, hard to interpret, and expressed in a high-dimensional space, and reduce it to a compact representation that retains the most useful information.
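The idea of compressing redundant data while keeping most of its information can be illustrated with a far simpler technique than deep learning: principal component analysis (PCA), used here only as a stand-in. The sketch below reduces 2-D points to 1-D using the closed-form solution for a 2x2 covariance matrix. The function name `pca_2d_to_1d` and the sample points are assumptions made for this example.

```python
import math


def pca_2d_to_1d(points):
    """Project 2-D points onto their first principal component.

    Returns (projections, explained_ratio), where explained_ratio is the
    share of the total variance kept by the single retained dimension.
    Assumes the points are not all identical (total variance > 0).
    """
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    centered = [(x - mean_x, y - mean_y) for x, y in points]

    # Entries of the 2x2 covariance matrix [[a, b], [b, c]].
    a = sum(x * x for x, _ in centered) / n
    b = sum(x * y for x, y in centered) / n
    c = sum(y * y for _, y in centered) / n

    # Closed-form direction and variance of the principal axis.
    theta = 0.5 * math.atan2(2 * b, a - c)
    ux, uy = math.cos(theta), math.sin(theta)
    top_variance = (a + c) / 2 + math.hypot((a - c) / 2, b)

    projections = [x * ux + y * uy for x, y in centered]
    return projections, top_variance / (a + c)


# Illustrative data: y is roughly 2 * x, so the two columns are redundant.
points = [(0, 0.1), (1, 1.9), (2, 4.05), (3, 5.95), (4, 8.0)]
projections, explained_ratio = pca_2d_to_1d(points)
```

Because the second coordinate is almost fully determined by the first, a single number per point preserves nearly all of the variance. Deep networks learn non-linear versions of this kind of compression, which this linear sketch does not attempt to show.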

In today’s AI, supervised learning is the most widely deployed and most successful approach in applications. Naive Bayes classification, logistic regression, and support vector machines generate billions of dollars in value every year. But unsupervised learning is growing fast: clustering and Principal Component Analysis address problems that supervised learning cannot. What’s more, semi-supervised learning and reinforcement learning are becoming more and more widely used in business.

Although there are many useful and powerful algorithms for different AI problems, no single general AI can be applied to every situation, and no one knows which of these algorithms will help build a general-purpose AI. From my point of view, deep neural networks with unsupervised learning will contribute the most to realizing that dream. Systems such as IBM’s Watson already combine many algorithms so that they can deal with various kinds of data. However, many researchers believe it is impossible to build full AI without cognitive psychology and neuroscience. There is still a long way to go before the AGI dream comes true.

2. How to link this structured information to the world, or, taking the above definition, how to build “meaning” for the agent?

After structuring information, the second problem is linking that structured information to the real world so that it acquires fundamental meaning for the agent. Interacting with the world presupposes having a body, so there is no AI without robotics. This realization is often called the “embodiment problem”. Most AI researchers now agree that embodiment is as essential to AI as intelligence itself. In the real world, and especially in the animal kingdom, we can see that each kind of body comes with a different form of intelligence.

Embodiment starts with the agent making sense of its own body parts and learning to control them to produce desired effects in the observed world, and then building its own notion of the world. This process is described by “sensorimotor theory”, which has been studied by researchers such as J. Kevin O’Regan.

3. How to synchronize this meaning with other agents?

This problem relates to the origin of culture. Unlike humans, some animals show only limited and simple forms of culture, expressed for example through dance or smell. Without culture, the essential catalyst of intelligence, AI would be nothing more than an academic curiosity.

However, culture is a learning process that involves psychology and cognitive ability. It is not something that can be hand-coded into a machine. Researchers are trying to understand this process by studying how children acquire cultural competencies.

The process is also closely linked to language learning, which is itself an evolutionary process: by interacting with the world, agents acquire new information and create new meanings, use them to communicate with other agents, and select the structures that prove most successful in communication. After learning from the mistakes made over hundreds of trials, the agents eventually settle on the best system. This is something that deep learning cannot explain. Some research labs, such as SoftBank Robotics, are going further and using this process to acquire more complex cultural conventions.
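This trial-and-error negotiation can be sketched as a minimal “naming game”, a kind of model used in research on language evolution. The article does not name a specific model, so everything below is an illustrative assumption: the function `run_naming_game`, the single shared object, and the rule that both agents drop their other candidate words after a successful exchange.

```python
import random


def run_naming_game(n_agents=10, rounds=5000, seed=0):
    """Simulate agents negotiating a shared name for a single object."""
    rng = random.Random(seed)
    inventories = [set() for _ in range(n_agents)]  # words each agent knows
    invented = 0

    for _ in range(rounds):
        speaker, hearer = rng.sample(range(n_agents), 2)
        if not inventories[speaker]:
            # The speaker has no word yet, so it invents a new one.
            inventories[speaker].add(f"word{invented}")
            invented += 1
        # Sort before choosing so the run is reproducible for a given seed.
        word = rng.choice(sorted(inventories[speaker]))
        if word in inventories[hearer]:
            # Success: both agents keep only the word that worked.
            inventories[speaker] = {word}
            inventories[hearer] = {word}
        else:
            # Failure: the hearer learns the new word for next time.
            inventories[hearer].add(word)
    return inventories


final_inventories = run_naming_game()
shared_words = set.union(*final_inventories)
```

No agent is told which word to use. Competing words appear, spread, and are dropped after failed or successful exchanges, and over many interactions the population typically converges on a single shared convention.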

4. Why does the agent do something at all rather than nothing? How to set all this into motion?

The last problem is about desire. Agents act because of “intrinsic motivation”, just as humans do not merely satisfy their survival needs but explore further, driven by some kind of intrinsic curiosity. Researchers like Pierre-Yves Oudeyer have shown that simple mathematical formulations are enough to account for complex and surprising behaviors. For example, an agent that tries to maximize its own rate of learning behaves in a way that looks like “curiosity”.
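A toy version of curiosity as maximizing the rate of learning is sketched below. It is not Oudeyer's actual formulation, only a simplified illustration under stated assumptions: each hypothetical `Activity` has a prediction error that shrinks by a fixed factor whenever it is practiced, and the agent greedily picks the activity whose error dropped the most on its last attempt.

```python
class Activity:
    """A toy task whose prediction error is multiplied by `decay` per practice."""

    def __init__(self, name, error, decay):
        self.name = name
        self.error = error
        self.decay = decay

    def practice(self):
        self.error *= self.decay
        return self.error


def explore(activities, steps):
    """Greedily practice the activity with the highest learning progress."""
    history = {a.name: [] for a in activities}
    # Warm-up: try every activity twice so that progress can be measured.
    for activity in activities:
        for _ in range(2):
            history[activity.name].append(activity.practice())

    def progress(activity):
        errors = history[activity.name]
        return errors[-2] - errors[-1]  # most recent drop in error

    for _ in range(steps):
        chosen = max(activities, key=progress)
        history[chosen.name].append(chosen.practice())
    return {name: len(errors) for name, errors in history.items()}


counts = explore(
    [
        Activity("already mastered", error=0.0, decay=1.0),
        Activity("learnable", error=1.0, decay=0.9),
        Activity("unlearnable noise", error=1.0, decay=1.0),
    ],
    steps=20,
)
```

After the warm-up, the agent spends all 20 remaining steps on the learnable activity. It ignores both the task it has already mastered and the one where no progress is possible, because neither offers any learning progress. A more realistic agent would also keep re-sampling the other activities from time to time, which this greedy sketch leaves out.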

Limitations of Current AIs

In the author’s view, there is currently no true AI in the world, and that includes even the most widely used and best-known AI services and applications. His view does not represent general thinking in the field, but current AIs do have their limitations.

Siri

Apple’s popular voice assistant cannot understand what you are saying if your sentence falls outside the range of its task domains.

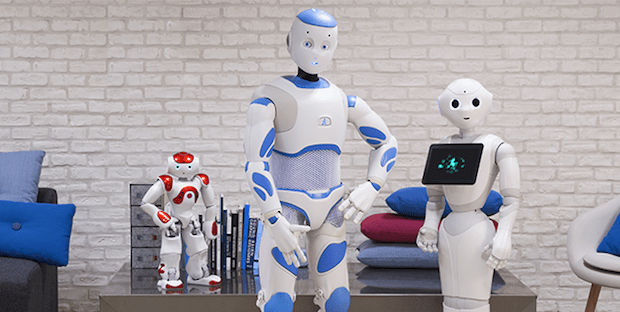

Pepper

SoftBank’s famous AI robot has the same limitation as Siri. What’s more, even though it is equipped with a speech emotion recognition system, it sometimes cannot tell a person’s true emotion and can be easily fooled.

Alipay

E-commerce giant Alibaba Group and affiliated online payment service Alipay are aiming to use facial recognition technology to take the place of passwords. The accuracy is satisfactory, but it cannot distinguish twins who have very similar faces.

These examples reflect the fact that current AIs are not smart enough. They cannot even process the data they receive from the world very well, let alone interact with the world.

Summary

It is encouraging to see the rapid advances of deep learning and the great success of AlphaGo, because they could lead to many useful applications in medical research, environmental preservation, and many other areas. However, deep learning is not the silver bullet that will lead to true AI. True AI is an intelligence that is capable of learning from the world, interacting naturally with us, understanding the complexity of our emotions, intentions, and cultural biases, and ultimately helping us make a better world.

References

[1] Harnad, S. The symbol grounding problem. Physica D: Nonlinear Phenomena, 1990, 42(1-3): 335-346.

Author: Yuanchao Li | Localized by Synced Global Team: Hao Wang
