Tesla has begun rolling out its FSD (“Full Self-Driving”) Beta for select customers in the United States. The release is an upgrade from the existing FSD software and has wowed Tesla fans with new automatic route navigation capabilities such as exiting highways, selecting road forks, manoeuvring around objects and other vehicles, and making turns.

Although Tesla CEO Elon Musk emphasized that the limited FSD Beta release would be done slowly and with extreme caution, it has already raised concerns and triggered spirited discussions in the self-driving community and across social media. Some have argued that FSD isn’t fully autonomous driving at all. Others have tweeted user test videos that show drivers having to take over the vehicle, questioning whether the FSD feature should be engaged on public roads at such an early stage of development.

Synced has seen screenshots of the software upgrade’s release notes posted on Reddit, in which Tesla warns users of FSD’s potential dangers in no uncertain terms: “It (FSD Beta) may do the wrong thing at the worst time, so you must always keep your hands on the wheel and attention to the road. Do not become complacent.”

On the “full self-driving” issue, Tesla’s FSD webpage seems to roll back the claim: “The currently enabled features require active driver supervision and do not make the vehicle autonomous.” This should not be surprising, as there are no fully self-driving cars in the US. “Every vehicle currently for sale in the United States requires the full attention of the driver at all times for safe operation,” explains the National Highway Traffic Safety Administration (NHTSA).

The NHTSA and the autonomous vehicle industry have largely adopted the six-level autonomy classification scheme for self-driving vehicles proposed by the Society of Automotive Engineers in 2016, where “0” is no automation and “5” is full automation. While Tesla operates at a comfortable L2, the popular Twitter account “Tesla Owners Online” responded to the FSD Beta release with the claim that the vehicles have “basically broken into level 3 autonomy now.” Is that true? “No,” says Alex Roy, Director of Special Operations for self-driving startup Argo AI.

As NHTSA specifies in the publication Human Factors Evaluation of Level 2 and Level 3 Automated Driving Concepts, “the major distinction between L2 and L3 is that at L3, the vehicle is designed so that the driver is not expected to constantly monitor the roadway while driving.”

Oliver Cameron, co-founder and CEO of self-driving startup Voyage, believes Tesla’s FSD Beta is polarizing the autonomous driving community for a couple of unique reasons: “no use of pre-recorded HD maps” and “perceiving the world with cameras (no lidar!)” The second point echoes the concerns of many — although most self-driving approaches have employed lidar as a central sensing component, Tesla has shunned it from the start.

Tesla further advises drivers in the release note, “use Full Self-driving in limited Beta only if you will pay constant attention to the road, and be prepared to act immediately, especially around blind corners, intersections, and in narrow driving situations.” Independent testers have posted videos where they encounter situations such as the vehicle proceeding at an unprotected junction with approaching cross-traffic. Cameron responded to the videos, “given FSD’s ‘beta’ designation, these sorts of issues are to be expected. However, the clips above were taken from only 7 minutes of driving. Seeing these types of issue, with that frequency, gives me pause that this system is ready for fully self-driving anytime soon.”

Cameron meanwhile sees benefits in Tesla’s mapping approach: “By generating a map on the fly, instead of pre-loading one recorded earlier, FSD can theoretically drive anywhere. Realizing cost-savings because of fewer sensor modalities.”

He also applauds FSD Beta’s enhanced traffic light detection, explaining “without encoding positions in a pre-recorded HD map, [traffic light detection] is inherently a data-driven problem. From the small amount of clips I’ve seen, FSD is able to accurately detect not just traffic light state, but the relevance of traffic lights to each lane. Nice!”

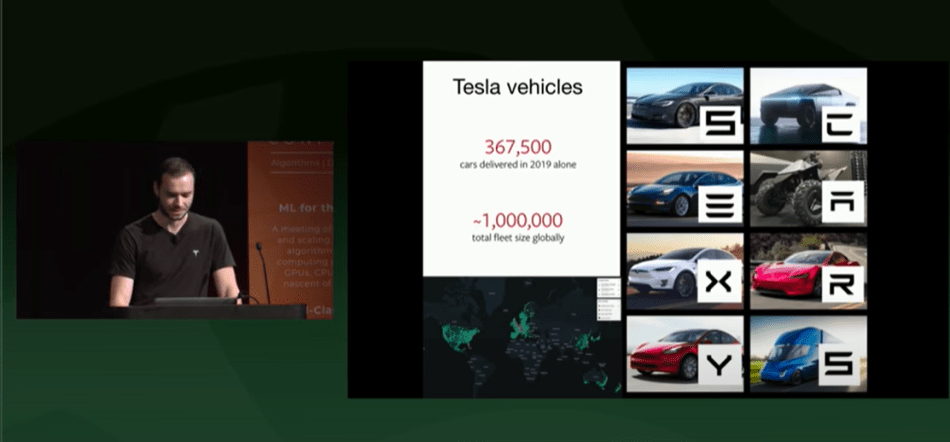

Tesla doesn’t rely on an internal test fleet or simulations to collect data; instead, it leverages the hundreds of thousands of sensor-equipped Teslas on the road to produce the data used to improve features such as Autopilot and FSD. In April, at the 5th Annual Scaled Machine Learning Conference 2020, Tesla’s Head of AI Andrej Karpathy announced that total miles driven with Tesla Autopilot had reached 3 billion (4,828,032,000 km).

“Their data advantage helps, but given this starting point, it is unclear if it is meaningful,” Cameron argues.

New York University Professor of Psychology and occasional ML sceptic Gary Marcus tweeted that ongoing massive data collection efforts in self-driving have “not this far yielded the fruit many expected,” and that the less-than-perfect performance of the FSD Beta raises a fundamental question: “Can the big data-heavy techniques that have gotten self-driving this far carry us to the finish line, or do we need further innovations to handle the multiplicity of edge cases?”

Nobody knows.

But one thing is certain — big tech self-driving research will continue, and will continue to cost money. Tesla owners will be contributing another US$2,000 starting this Thursday, when the FSD Beta upgrade will push the Autopilot/FSD price up to about US$10,000. Elon Musk tweeted that FSD monthly rentals will be made available sometime next year.

Reporter: Fangyu Cai | Editor: Michael Sarazen

