Beijing-based computer vision unicorn Megvii Technology runs the world’s largest face-recognition technology platform, Face++. The company provides innovative solutions for object detection and image recognition using AI-powered techniques.

This week, Megvii (Face++) Chief Scientist Dr. Jian Sun and his research team will present multiple projects at the European Conference on Computer Vision (ECCV) 2018, one of the world's top three international computer vision conferences.

AI has done an impressive job of solving individual visual recognition problems, but it still lacks the human ability to take in abundant visual information at a glance. When a person looks at a living room, for example, they can easily parse concepts at multiple perceptual levels (scene, objects, parts, textures, and materials) as well as the compositional structures linking those concepts. Megvii defines this ability as Unified Perceptual Parsing (UPP). Accomplishing UPP with a learning framework called "UPerNet" is the subject of the recent paper Unified Perceptual Parsing for Scene Understanding by Dr. Sun et al., one of the projects to be presented at ECCV.

The research team's first challenge was creating a high-quality training dataset, the foundation of any learning network. No single existing image dataset provides all the levels of visual information UPP requires, so the authors merged and standardized several labeled datasets built for specific tasks: ADE20K, Pascal-Context, and Pascal-Part for scene, object, and part parsing; OpenSurfaces for material and surface recognition; and the Describable Textures Dataset (DTD) for texture recognition. The result was the Broden+ dataset, with 57,095 images for model training.
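Merging datasets this way means mapping each source's class names into one shared vocabulary. The sketch below illustrates the idea under stated assumptions: the `SYNONYMS` table, the lowercase normalization, and the function names are hypothetical, and the actual Broden+ standardization rules are not described in the article.

```python
from collections import defaultdict

# Hypothetical synonym table mapping dataset-specific class names to one
# canonical name. The real Broden+ standardization rules are not given here.
SYNONYMS = {
    "tv": "television",
    "tv monitor": "television",
    "sofa": "couch",
}


def canonical(name: str) -> str:
    """Normalize a raw class name: trim, lowercase, then apply synonyms."""
    key = name.strip().lower()
    return SYNONYMS.get(key, key)


def merge_label_spaces(
    datasets: dict[str, list[str]],
) -> tuple[dict[str, int], dict[str, dict[str, int]]]:
    """Build one shared vocabulary and a per-dataset remapping table.

    `datasets` maps a dataset name to its list of raw class names.
    Returns (canonical name -> unified id, dataset -> raw name -> unified id).
    """
    vocab: dict[str, int] = {}
    remap: dict[str, dict[str, int]] = defaultdict(dict)
    for ds_name, classes in datasets.items():
        for raw in classes:
            canon = canonical(raw)
            if canon not in vocab:
                # First time this concept is seen: give it the next free id.
                vocab[canon] = len(vocab)
            remap[ds_name][raw] = vocab[canon]
    return vocab, dict(remap)


if __name__ == "__main__":
    vocab, remap = merge_label_spaces({
        "ade20k": ["Sofa", "TV", "wall"],
        "pascal_context": ["couch", "tv monitor", "Wall"],
    })
    print(vocab)
```

With the example input, "Sofa" and "couch" end up sharing one id, so annotations from both sources supervise the same output class.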

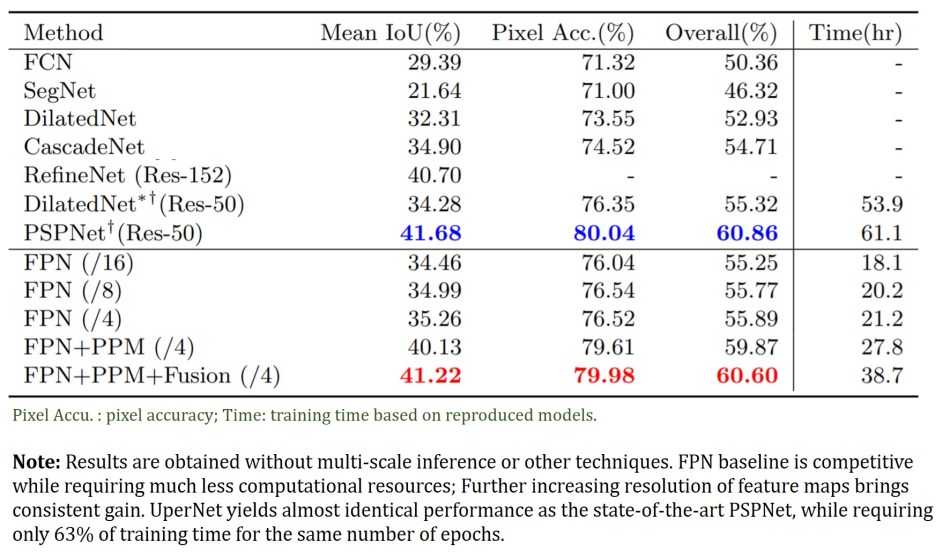

The authors addressed the challenge of heterogeneous annotations (e.g., some annotations are image-level while others are pixel-level) by designing a multi-task framework that detects various visual concepts simultaneously. UPerNet is based on a Feature Pyramid Network (FPN), which uses a top-down architecture with lateral connections to extract multi-level feature representations from the backbone's inherent pyramidal hierarchy.
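One simple way to train a single multi-task network on sources with different annotation types is to draw each batch from one source and apply loss only to the heads that source labels. The sketch below assumes that scheme; the `SOURCE_TASKS` mapping and the helper names are hypothetical, and the paper's exact sampling schedule may differ.

```python
import random

# Hypothetical mapping from each source dataset to the heads it can
# supervise, loosely following the dataset roles described in the article.
SOURCE_TASKS = {
    "ade20k": {"scene", "object", "part"},
    "pascal": {"object", "part"},
    "opensurfaces": {"material"},
    "dtd": {"texture"},
}


def sample_source(rng: random.Random) -> str:
    """Pick one data source per iteration so every batch has uniform labels."""
    return rng.choice(sorted(SOURCE_TASKS))


def masked_multitask_loss(head_losses: dict[str, float], source: str) -> float:
    """Sum only the losses of heads that the batch's source annotates.

    Heads without labels for this source contribute nothing, so in a real
    framework no gradient would flow into them for this batch.
    """
    supervised = SOURCE_TASKS[source]
    return sum(loss for task, loss in head_losses.items() if task in supervised)


if __name__ == "__main__":
    rng = random.Random(0)
    src = sample_source(rng)
    fake_losses = {"scene": 1.0, "object": 2.0, "part": 0.5,
                   "material": 3.0, "texture": 4.0}
    print(src, masked_multitask_loss(fake_losses, src))
```

Masking at the loss level keeps one shared backbone while letting image-level labels (such as texture classes) and pixel-level labels (such as object masks) coexist in training.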

Because the FPN's empirical receptive field is too small to capture enough global context, a Pyramid Pooling Module (PPM) is applied to the last layer of the backbone network before its output is fed into the FPN top-down branch. In addition, the object and part heads take as input a fused map that combines the feature maps from all FPN levels, which improves model performance.
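A pyramid pooling module gives every spatial position a summary of progressively larger regions of the image. The sketch below is a simplified, single-channel illustration in pure Python: it assumes the bin sizes (1, 2, 3, 6) commonly used in PSPNet, and it omits the 1x1 convolutions a real PPM applies and uses nearest-neighbour instead of bilinear upsampling. `fuse_levels` is a hypothetical helper showing the "upsample and stack" idea behind fusing multi-level maps.

```python
Grid = list[list[float]]


def adaptive_avg_pool(fmap: Grid, bins: int) -> Grid:
    """Average-pool an H x W map into a bins x bins grid (adaptive pooling)."""
    h, w = len(fmap), len(fmap[0])
    out = []
    for i in range(bins):
        # Cell i covers rows floor(i*h/bins) up to ceil((i+1)*h/bins).
        r0, r1 = (i * h) // bins, -(-((i + 1) * h) // bins)
        row = []
        for j in range(bins):
            c0, c1 = (j * w) // bins, -(-((j + 1) * w) // bins)
            cells = [fmap[r][c] for r in range(r0, r1) for c in range(c0, c1)]
            row.append(sum(cells) / len(cells))
        out.append(row)
    return out


def upsample_nearest(grid: Grid, h: int, w: int) -> Grid:
    """Nearest-neighbour upsampling of a small grid to h x w."""
    gh, gw = len(grid), len(grid[0])
    return [[grid[(r * gh) // h][(c * gw) // w] for c in range(w)]
            for r in range(h)]


def pyramid_pooling(fmap: Grid, bin_sizes=(1, 2, 3, 6)) -> list[Grid]:
    """Return the input plus one pooled-and-upsampled map per pyramid level.

    Treating these maps as channels gives each position global context
    (bin 1) as well as coarse-to-fine regional context.
    """
    h, w = len(fmap), len(fmap[0])
    levels = [fmap]
    for b in bin_sizes:
        levels.append(upsample_nearest(adaptive_avg_pool(fmap, b), h, w))
    return levels


def fuse_levels(maps: list[Grid]) -> list[Grid]:
    """Upsample every map to the largest resolution and stack as channels."""
    h = max(len(m) for m in maps)
    w = max(len(m[0]) for m in maps)
    return [upsample_nearest(m, h, w) for m in maps]


if __name__ == "__main__":
    fmap = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
    for level in pyramid_pooling(fmap):
        print(level)
```

In UPerNet the pooled context feeds the top of the FPN top-down branch, so even the finest FPN level inherits image-wide information.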

A total of 36,500 images from 365 scenes in the Places-365 dataset were used for model validation. Both quantitative and qualitative results suggest UPerNet is effective at unifying multi-level visual attributes simultaneously, with performance and training time requirements competitive with current state-of-the-art methods.
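The article does not name the metrics behind these quantitative results. As a generic illustration only (an assumption, not the paper's reported protocol), segmentation outputs are commonly scored with pixel accuracy and mean intersection-over-union (mIoU), both of which can be computed from a confusion matrix over flattened per-pixel labels:

```python
def confusion_matrix(pred: list[int], gt: list[int], num_classes: int) -> list[list[int]]:
    """Count (ground-truth, prediction) pairs over flattened pixel labels."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for p, g in zip(pred, gt):
        cm[g][p] += 1
    return cm


def pixel_accuracy(cm: list[list[int]]) -> float:
    """Fraction of pixels whose predicted class equals the ground truth."""
    correct = sum(cm[i][i] for i in range(len(cm)))
    total = sum(sum(row) for row in cm)
    return correct / total


def mean_iou(cm: list[list[int]]) -> float:
    """Average IoU over classes present in the prediction or ground truth."""
    n = len(cm)
    ious = []
    for k in range(n):
        tp = cm[k][k]
        fp = sum(cm[g][k] for g in range(n)) - tp  # predicted k, truly other
        fn = sum(cm[k]) - tp                       # truly k, predicted other
        denom = tp + fp + fn
        if denom > 0:
            ious.append(tp / denom)
    return sum(ious) / len(ious)


if __name__ == "__main__":
    cm = confusion_matrix([0, 0, 1, 1], [0, 1, 1, 1], num_classes=2)
    print(pixel_accuracy(cm), mean_iou(cm))
```

Pixel accuracy is dominated by large regions such as walls and floors, which is why mIoU, averaging per class, is usually reported alongside it.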

UPerNet can also support deeper scene understanding by identifying compositional relations in input images, such as scene-object, object/part-material, and material-texture relations. The results indicate that the extracted relations are reasonable and match human understanding of how these concepts relate to one another.
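The article does not describe how these relations are extracted. One straightforward approach, assumed here for illustration, is to run the parser over many images and count how often each object is detected within each predicted scene. The function name and input format below are hypothetical.

```python
from collections import Counter, defaultdict


def mine_scene_object_relations(predictions, top_k=3):
    """Rank objects by how often they appear in images of each scene.

    `predictions` is an iterable of (scene_label, object_labels) pairs, one
    per image, e.g. a parser's outputs on an unlabeled image collection.
    Returns scene -> list of (object, fraction of that scene's images).
    """
    scene_counts = Counter()
    pair_counts = defaultdict(Counter)
    for scene, objects in predictions:
        scene_counts[scene] += 1
        # Count each object at most once per image.
        pair_counts[scene].update(set(objects))
    relations = {}
    for scene, n_images in scene_counts.items():
        ranked = pair_counts[scene].most_common(top_k)
        relations[scene] = [(obj, count / n_images) for obj, count in ranked]
    return relations


if __name__ == "__main__":
    preds = [
        ("bedroom", {"bed", "lamp"}),
        ("bedroom", {"bed"}),
        ("kitchen", {"stove", "sink"}),
        ("kitchen", {"sink"}),
    ]
    print(mine_scene_object_relations(preds))
```

The same counting pattern extends to object-material or material-texture pairs by swapping which predicted labels are paired.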

The paper Unified Perceptual Parsing for Scene Understanding was published on arXiv in July. The related open-source code is available on GitHub.

Source: Synced China

Localization: Tingting Cao | Editor: Michael Sarazen
