UC Berkley Professor David Wagner delivered a keynote speech to open the ACM Conference on Computer and Communications Security (CCS) 2017. The top tier computer security and privacy conference was held in Dallas, USA. Prof. Wagner joked this was a “kindergarten level introduction” to adversarial machine learning, covering topics such as model evasion, model extraction, model inversion, differential privacy and data pollution attack.

Machine Learning Models are Vulnerable

Instead of starting by explaining what adversarial machine learning is, Prof. Wagner showed multiple sets of images that looked the same but were predicted with incorrect labels.

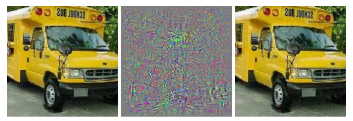

Figure 1. An image of bus recognized as hummingbird after adding some noise (Szegedy 2014)

For example, in the figure above, an image of a bus (left) was perturbed by adding noise (middle) to form a new image (right) which looks the same but is then detected as a hummingbird by the model.

After showing these adversarial examples, Prof. Wagner explained how they were generated, comparing the process to solving an optimization problem:

Figure 2. Finding adversarial examples as an optimization problem (Carlini 2017)

Here x is the original input and delta stands for the perturbation added to x. The goal is to find a new input x+delta that labeled as target label t by the model, which is different from the original label. At the same time, we need to minimize the distance between the original input and the perturbed new input.

Further, if we can find a proper loss function to describe the optimization problem, gradient descent methods can be then applied to find the solutions.

After showing how to find such adversarial examples, an audience member asked how to attack models without knowing the models’ parameters. As in the previous examples mentioned, loss function of the original model is used to solve the optimization problem which makes it a white-box attack. However, in the real world, a black-box attack where the model is not known by the adversaries is a more common situation.

Prof. Wagner then introduced transferability of machine learning models: some examples can be predicted the same regardless of model parameters or even different algorithms (i.e. neuron networks, SVM, decision tree). Therefore, attackers can train a local model and find adversarial examples for it first, then transfer those examples to a black-box model to complete the attack.

How can we defend against such attacks? Prof. Wagner identified several possible solutions — such as protecting the parameters, adding randomness and making it non-differential — but none of these works due to transferability. “No known effective defense exists,” he said, explaining that we can only improve the robustness against adversarial examples with adversarial retraining. The state-of-the-art paper on the subject will be published in July 2018 in conjunction with the ICML 2018 in Stockholm.

Model Extraction

After showing how machine learning models might be misled by crafted inputs, Prof. Wagner introduced another problem called model extraction. As machine learning models are trained using massive of data and resources, the model itself becomes an expensive property for its owners. However, recent research has shown that an adversary can “steal” the model by simply querying it (Tramer usenix 16). In 2017 researchers improved model extraction performance issues by reducing the query numbers (Papernot AsiaCCS 17). Besides being used to steal models, model extraction also facilitates black-box attacks since it learns a substitute model by just querying the black-box model. Attackers can use this learned substitute model as a white model instead of training a new one which can be difficult due to dataset limitations. There is no existing defense against model extraction either.

Figure 3. Substitute DNN training algorithm with dataset augmentation (Papernot Asia CCS 2017).

Model Inversion and Differential Privacy

Machine learning models also have privacy vulnerabilities. Research has shown that training data can be reconstructed from the model. The principle behind model inversion is simple: using features synthesized from the model to generate an input that maximizes the likelihood of being predicted with a certain label. This could be critical to privacy-sensitive data such as medical entries and government classified information.

Figure 4. Reconstructed image of training data from the model (Fredrikson 2015)

Besides model inversion, differential privacy is another topic related to privacy vulnerability of machine learning models. More efforts have been put into this problem, which could be defended against by adding noise in gradient descent or partitioning the data during the training process.

Figure 5. Defense against differential privacy through partitioning the training process.

Figure 5. Defense against differential privacy through partitioning the training process.

Training Data Pollution

Due to the huge volume of data required to train deep learning models, crowd-sourcing is necessary. This creates a new attack surface adversaries can use to pollute the training dataset. Researchers have found that by injecting polluted data into the training data, it is possible to affect accuracy and even insert a backdoor in the learning model.

Discussion and Conclusion

A discussion on safety issues regarding adversarial machine learning was canceled due to time issues.

In the Q&A, the author of DolphinAttack, Wenyuan Xu asked whether it would be possible to eliminate all the adversarial examples since these seem to be a property of the machine learning model itself. Prof. Wagner did not answer in the affirmative, leaving an open question for the community to consider.

References

Intriguing properties of neural networks

Christian Szegedy, Wojciech Zaremba, Ilya Sutskever, Joan Bruna, Dumitru Erhan, Ian Goodfellow, Rob Fergus

Towards Evaluating the Robustness of Neural Networks

Nicholas Carlini, David Wagner

Towards Deep Learning Models Resistant to Adversarial Attacks

Aleksander Madry, Aleksandar Makelov, Ludwig Schmidt, Dimitris Tsipras, Adrian Vladu

Stealing Machine Learning Models via Prediction APIs

Florian Tramèr, École Polytechnique Fédérale de Lausanne (EPFL); Fan Zhang, Cornell University; Ari Juels, Cornell Tech; Michael K. Reiter, The University of North Carolina at Chapel Hill; Thomas Ristenpart, Cornell Tech

Practical Black-Box Attacks against Machine Learning

Nicolas Papernot, Patrick McDaniel, Ian Goodfellow, Somesh Jha, Z.Berkay Celik, and Ananthram Swami

Model Inversion Attacks that Exploit Confidence Information and Basic Countermeasures.

M. Fredrikson, S. Jha, T. Ristenpart

DolphinAtack: Inaudible Voice Commands

Guoming Zhang, Chen Yan, Xiaoyu Ji, Taimin Zhang, Tianchen Zhang, Wenyuan Xu

Journalist: Yulong Cao | Editor: Hao Wang, Michael Sarazen

0 comments on “David Wagner on Adversarial Machine Learning at ACM CCS’17”