In this blog post, experts from several fields voice their concern that, with the rapid rise of artificial intelligence over the next few decades, intelligent robots could take over the human world and wipe out human culture.

Dr. Ben Garrod, an evolutionary biologist at Anglia Ruskin University, explained why the robot apocalypse may come true. His first concern is the accelerating pace of robot evolution. It took hundreds of thousands of years for human beings to evolve from apes into modern humans armed with high-tech tools. Robots, however, need only decades, thanks to their ultra-fast computational speed. Dr. Garrod described robots as an invasive species that may endanger, or even erase, human culture, as in the movie “I, Robot”. With no clear stopping point for robot development, he warned, robots may begin to acquire the ability to make conscious decisions, which is the clear line between a human and an artificial robot. Dr. Garrod also stated that the greatest danger is neither that robots will look like us nor that they will act like us, but that they will start to think like us.

The blog also gives the example of Erica, an android that has gained considerable fame recently. Shown in Figure 1 with her creator, Dr. Hiroshi Ishiguro, Erica was described by Dr. Ishiguro as “the most beautiful and most human-like autonomous humanoid android in this world.” His objective is to create a robot that can think and act independently, and Erica does so, except that she cannot move her body. While she is not able to act like a human, she can think, and she can answer or evade questions based on her own thoughts.

Figure 1.

Dr. Garrod is not the only one holding this view. Dr. Owen Holland, a professor of cognitive robotics, believed that the beauty of the human race rests on consciousness, and that making conscious decisions is what drives humans to think. Some experts suggested measures to prevent a robotic takeover and constrain the development of robots. Experts from the University of Oxford built recorders that can be fitted to robots to capture their behavior, so that analysts can obtain more information in case of a malfunction.

Technical Comment

What Dr. Garrod and other experts fear is exactly what happens in most sci-fi movies about robot retaliation: humans have little power or control over highly intelligent robots, and then one day the robots turn on the human race without warning.

But how far are we from that point? Tesla is still struggling with phase 2 of autonomous driving. Amazon Go stores are still under development and testing. Sophia, the most recent state-of-the-art robot, shown in Figure 2, still needs more work on language processing, although her human-like expressions are astonishingly realistic.

Figure 2.

Last month, Mark Zuckerberg and Elon Musk also debated this issue, and their tweets are likely to escalate the concern.

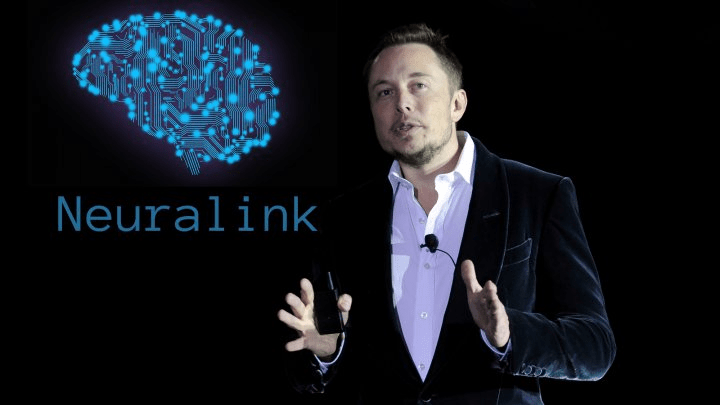

Elon Musk believes that human beings will have to evolve alongside computers before the robots start a war, so he founded another company, Neuralink, adding to his empire.

The objective of Neuralink is to develop ultra-high-bandwidth brain-machine interfaces (BMI) that connect humans and computers. The idea is that humans would then be able to receive and process information as fast as robots can, putting robots and humans on the same level. On this question, experts are divided into two camps: one pursuing robotic technology, the other BMI.

Among the frontrunners in BMI are BrainCo and BrainRobotics, founded by Bicheng Han at Harvard University. Both focus on enhancing the capabilities of the human brain. In my view, robotic development will not stop simply because of its potential danger to humans; atomic bombs and nuclear power are examples. That danger does, however, give companies like Neuralink a reason to push their technology to the next level.

References:

https://singularityhub.com/2017/07/05/what-these-androids-can-teach-us-about-being-human/

http://www.hansonrobotics.com/robot/sophia/

http://www.marketwatch.com/story/elon-musk-mark-zuckerberg-spar-on-ai-2017-07-25

http://thecraft.info/2017/04/21/neuralink-looking-fordward-connect-brain-machine/

Blog Author: Frances Bloomfield

Author: Bin Liu | Editor: Zhen Gao | Localized by Synced Global Team: Xiang Chen
