Intro & Abstract

Computer vision has been a focus of industry in recent years and has developed substantially. Deep learning has become comparatively mature for image classification tasks and now powers intelligent applications in many fields. The two talks summarized below cover the hardware innovations that have advanced computing power and a new approach to facial expression analysis.

Deep Learning in Industry with NVIDIA

Major Idea

Today’s advanced deep neural networks combine algorithms, big data, and the computational power of GPUs to learn with high speed, accuracy, and scale. Deep learning has shown its advantages over traditional algorithms in solving many big data problems, such as computer vision and speech recognition.

Key Points

- Why do we need GPUs in deep learning?

Traditional computer vision requires domain experts to carefully design feature detectors. The quality of the result therefore depends on a patchwork of hand-designed algorithms, and improvements require both experts and time. Deep learning, on the other hand, learns features from large amounts of data, so the size of the dataset and the available computing power are the two factors that limit quality. The nature of neural networks (a large number of matrix-like calculations for each neuron and a high degree of parallelism in the architecture) allows GPUs to perform very well on this workload. Since training a neural network consumes a lot of computational resources, powering deep learning with GPUs substantially accelerates the process.
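To make the parallelism argument concrete, here is a minimal sketch in plain Python (no GPU involved). The layer sizes, the ReLU activation, and all function names are illustrative assumptions, not details from the talk. It shows that a layer is a grid of independent dot products, which is the kind of work a GPU can spread over many threads.

```python
import random

def neuron(x, weights, bias):
    """One neuron: weighted sum of the inputs plus a bias, then ReLU."""
    total = sum(xi * wi for xi, wi in zip(x, weights)) + bias
    return max(total, 0.0)

def dense_layer(batch, weight_columns, biases):
    """Apply every neuron to every input in the batch.

    Each (input, neuron) pair is independent of all the others, so the
    whole grid of dot products could be computed in parallel.
    """
    return [[neuron(x, w, b) for w, b in zip(weight_columns, biases)]
            for x in batch]

random.seed(0)
n_inputs, n_features, n_neurons = 4, 8, 3   # illustrative sizes
batch = [[random.gauss(0, 1) for _ in range(n_features)]
         for _ in range(n_inputs)]
weight_columns = [[random.gauss(0, 1) for _ in range(n_features)]
                  for _ in range(n_neurons)]
biases = [0.1] * n_neurons

outputs = dense_layer(batch, weight_columns, biases)
independent_tasks = n_inputs * n_neurons    # dot products with no data dependencies
print(len(outputs), len(outputs[0]), independent_tasks)
```

On a CPU these 12 dot products run one after another; real networks have millions of them per layer, which is where parallel hardware pays off.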

- NVIDIA Deep Learning SDK

The NVIDIA Deep Learning SDK provides powerful tools and libraries for designing and deploying GPU-accelerated deep learning applications. It includes libraries for deep learning primitives, inference, video analytics, linear algebra, sparse matrices, and multi-GPU communications.

- Example: Deep Learning Inference Engine (TensorRT): TensorRT can be used to rapidly optimize, validate, and deploy trained neural networks for inference on hyperscale data centers, embedded platforms, or automotive product platforms. With INT8 optimized precision, TensorRT could deliver 3x more throughput while using 61% less memory in applications that rely on high-accuracy inference.
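The idea behind INT8 precision can be sketched with simple symmetric linear quantization. This is an assumption for illustration only: the talk did not describe how TensorRT calibrates its scales, and the weights below are made up. The sketch maps each 32-bit float to an 8-bit integer plus one shared scale factor, then measures the rounding error.

```python
def quantize_int8(values):
    """Symmetric linear quantization of floats to the range [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Map the integers back to approximate float values."""
    return [q * scale for q in quantized]

weights = [0.82, -1.27, 0.003, 0.5, -0.64]   # made-up FP32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding to the nearest integer bounds the error by half a step
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error <= scale / 2 + 1e-12)
```

Each value now fits in one byte instead of four, at the cost of a bounded rounding error. The throughput and memory figures quoted above are NVIDIA's and are not derived from this sketch.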

- Deep Learning Use Case

- HIKVISION — Surveillance

- Generally speaking, deep learning did a better job at recognizing objects across all categories. Most importantly, deep learning could pick up small and subtle details that are hard for humans to judge, such as whether a person is making a phone call.

- Digital Business/AI Enterprise

- With deep learning powered by GPUs, linking product pictures to online sellers could be faster and more accurate. Moreover, as the amount of data that businesses process continues to grow rapidly, the data centers in charge of business analysis require ever more computing power, which GPUs could provide.

- Medical Care

- Pilot projects such as MARVIN by SocialEyes could change the way medical services are provided and benefit underprivileged people and areas where services are hard to deliver. For MARVIN, images of a patient’s eye could be transferred to a GPU-enabled device. Deep learning then analyzes the position of the retina and searches for possible diseases.

Follow-up Questions

- Could we achieve equivalent accuracy with a smaller amount of data?

- Could learning be freed from labeled data and move toward unsupervised learning?

- Could specialized deep learning be modified towards a wider, progressive intelligence?

Fine-grained Emotional Profiling

Major Idea

Human decisions and actions are driven by emotions, and facial expressions reveal many of these emotions. This talk presented the latest research and insights on facial expression analysis from the Advanced Digital Sciences Center (ADSC)*. Unlike existing approaches, a new perspective called fine-grained emotional profiling avoids classifying emotions into predefined categories and uses a dimensional paradigm instead.

*The Advanced Digital Sciences Center (ADSC) is a joint research centre of the University of Illinois at Urbana-Champaign (UIUC) and A*STAR that focuses on research and technology transfer.

Key Points

- Importance of Facial Expression Analysis

Emotions are very important, and sometimes decisive, in our daily decisions and actions. Companies therefore care about interpreting customers’ emotions so that they can gather feedback on their products and identify potential buyers. Facial expressions offer information about a person’s internal state and could be a feasible way to profile people.

- Transfer Learning

A major assumption in many traditional machine learning algorithms is that the training and test data should be in the same feature space and have the same distribution. When the distribution changes, most statistical models need to be rebuilt from scratch using newly collected training data. However, in many real-world applications, it is expensive or impossible to recollect the needed training data and rebuild the models. For example, we sometimes have a classification task in one domain of interest but only have sufficient training data in another domain, where the latter data may be in a different feature space or follow a different data distribution. In such cases, knowledge transfer, or transfer learning, between task domains can greatly improve learning performance by avoiding expensive data-labeling effort and by reducing the overfitting seen with small datasets.

- Classic Facial Expression Analysis

Classic facial expression analysis involves deep learning with Convolutional Neural Networks (CNNs). The analysis starts from face pixels and automatically predicts which label best describes the current emotional state.

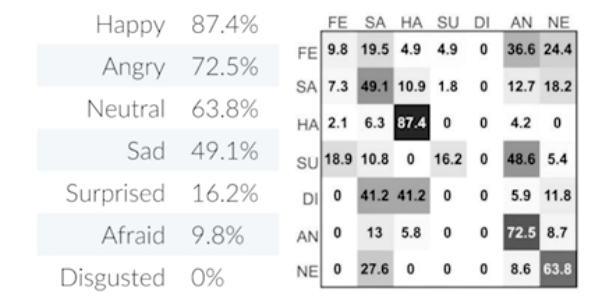

In the Emotion Recognition in the Wild Challenge 2015 (EmotiW 2015), teams were asked to assign a single emotion label to each video clip, chosen from the six universal emotions (Anger, Disgust, Fear, Happiness, Sadness and Surprise) and Neutral. Since the images were taken from movies and reality shows, variations in head pose, illumination, image resolution, etc. posed a real challenge. In addition, the training and validation datasets were very small, which prevented deep learning from generalizing and predicting unseen images correctly.

ADSC devised a 2-stage supervised fine-tuning scheme that involved transfer learning from a very large generic dataset (ImageNet). The first tuning stage used a large dataset of small, low-resolution images (FER2013). The second tuning stage used a smaller dataset of larger, higher-quality images.

The scheme produced an accuracy of 55.6% and earned ADSC third place in the contest. However, the researchers noticed difficulties with certain expressions (Surprised, Afraid and Disgusted), and this was the case for all the winning teams.
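The shape of such a two-stage scheme can be sketched with a toy logistic model in plain Python. Everything here is an assumption for illustration: the model, the synthetic data, the learning rates, and the starting weights are stand-ins, and ADSC's actual work used CNNs on images. The point is only that each stage continues training from the weights left by the previous one.

```python
import math
import random

def predict(weights, x):
    """Logistic model: probability that x belongs to class 1."""
    z = sum(w * xi for w, xi in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))

def mean_loss(weights, data):
    """Average cross-entropy loss over (features, label) pairs."""
    eps = 1e-12
    total = 0.0
    for x, y in data:
        p = predict(weights, x)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(data)

def fine_tune(weights, data, lr, epochs):
    """Continue training from the given weights (full-batch gradient descent)."""
    weights = list(weights)
    for _ in range(epochs):
        grad = [0.0] * len(weights)
        for x, y in data:
            err = predict(weights, x) - y
            for i, xi in enumerate(x):
                grad[i] += err * xi / len(data)
        weights = [w - lr * g for w, g in zip(weights, grad)]
    return weights

def make_data(n, noise, rng):
    """Toy two-class data; `noise` stands in for low image quality."""
    data = []
    for _ in range(n):
        y = rng.randint(0, 1)
        center = 1.0 if y == 1 else -1.0
        x = [center + rng.gauss(0, noise), center + rng.gauss(0, noise), 1.0]
        data.append((x, y))
    return data

rng = random.Random(0)
large_low_quality = make_data(500, noise=2.0, rng=rng)   # plays the role of FER2013
small_high_quality = make_data(30, noise=0.5, rng=rng)   # plays the role of the target set

pretrained = [0.05, -0.05, 0.0]   # stand-in for weights from a generic dataset
stage1 = fine_tune(pretrained, large_low_quality, lr=0.1, epochs=50)
stage2 = fine_tune(stage1, small_high_quality, lr=0.05, epochs=50)
```

The second stage uses a smaller learning rate here so that the small dataset adjusts the stage-one weights rather than overwriting them. That is a common fine-tuning choice, not something stated in the talk.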

- Fine-grained Facial Expression Analysis

Because it is very difficult to differentiate among negative expressions, and because simple classification cannot reflect variations within an emotion, researchers at ADSC turned to a regression model of emotions.

- Emotion Continuum

Emotion Continuum is a concept inspired by Russell’s circumplex model of affect. Instead of classifying emotions into the seven prototypical universal categories, three continuous attributes are used as measures so that subtle changes could be reflected.

- Three Emotion Attributes:

- Arousal: How energetic/passive is the emotion

- Valence: How positive/negative is the emotion

- Intensity: How different from neutral
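As a rough illustration of the dimensional paradigm, an emotional state can be stored as three continuous numbers instead of one category label. The value ranges, the class name, and the example values below are assumptions made for this sketch. The talk did not specify how ADSC scales or computes the attributes.

```python
from dataclasses import dataclass
import math

@dataclass(frozen=True)
class EmotionProfile:
    """One point on the emotion continuum (value ranges are assumed)."""
    arousal: float    # -1.0 (passive) to 1.0 (energetic)
    valence: float    # -1.0 (negative) to 1.0 (positive)
    intensity: float  # 0.0 (neutral) to 1.0 (strongest)

    def distance(self, other):
        """Euclidean distance between two profiles in attribute space."""
        return math.dist(
            (self.arousal, self.valence, self.intensity),
            (other.arousal, other.valence, other.intensity),
        )

# Two expressions a categorical classifier might both label "Happiness"
mild_smile = EmotionProfile(arousal=0.1, valence=0.4, intensity=0.2)
big_laugh = EmotionProfile(arousal=0.8, valence=0.9, intensity=0.9)
neutral = EmotionProfile(arousal=0.0, valence=0.0, intensity=0.0)

print(mild_smile.distance(big_laugh) > 0)
```

Under a seven-category scheme the two expressions would be indistinguishable, whereas the continuous profile keeps them apart and still allows comparison against neutral.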

- ADSC’s Affective Technology

The ADSC team is looking at a host of applications for its affective technology. Advertising agencies could measure people’s reactions when they see a billboard or watch a commercial to determine ad effectiveness. Human resources departments could record and quantify the expressions of job candidates during an interview. The ability to analyze the expressions of a crowd could prove useful during political speeches, or anywhere a large crowd gathers.

Instead of dealing with pixels, ADSC’s affective technology tracks 49 facial points and applies statistical machine learning. The training dataset was taken from psychophysical validation studies. Since the technology uses statistical machine learning techniques instead of deep learning, the task can be done in real time on a typical PC without GPUs. A mean accuracy of 86% is achieved.
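A hedged sketch of why a landmark-based pipeline is cheap: 49 tracked points give only 98 numbers per face, so a simple model needs very little arithmetic per frame. The normalization step, the linear model, and the random weights below are assumptions for illustration. The talk did not say which features or which statistical model ADSC uses.

```python
import random

NUM_POINTS = 49  # number of tracked facial points, from the talk

def normalize(points):
    """Center the points on their centroid and scale them to unit size,
    so the features do not depend on where or how large the face is."""
    cx = sum(x for x, _ in points) / len(points)
    cy = sum(y for _, y in points) / len(points)
    centered = [(x - cx, y - cy) for x, y in points]
    size = max(max(abs(x), abs(y)) for x, y in centered) or 1.0
    return [(x / size, y / size) for x, y in centered]

def to_features(points):
    """Flatten 49 (x, y) points into a 98-value feature vector."""
    return [coord for point in normalize(points) for coord in point]

def predict_attribute(features, weights, bias):
    """Linear model: one multiply-add per feature."""
    return sum(f * w for f, w in zip(features, weights)) + bias

rng = random.Random(0)
# Fake tracker output standing in for real landmark coordinates
face = [(rng.uniform(100, 200), rng.uniform(80, 220)) for _ in range(NUM_POINTS)]
# Hypothetical, untrained weights; a real system would learn these from data
weights = [rng.uniform(-0.1, 0.1) for _ in range(2 * NUM_POINTS)]

features = to_features(face)
valence = predict_attribute(features, weights, bias=0.0)

# The same face, shifted and enlarged, yields the same features
moved = [(2 * x + 50, 2 * y - 30) for x, y in face]
print(len(features))
```

Predicting one attribute this way costs 98 multiply-adds, which is consistent with the claim that the approach can run in real time without a GPU.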

- https://www.youtube.com/watch?v=r7ZpwhVcuQ8 (a short video demonstration)

- Comparisons between two facial expression analysis techniques

- Deep learning and statistical machine learning are complementary to each other

- They have their own advantages in different aspects: dataset, computing power and explanatory power

Related Papers/Resources (Suggested Readings)

https://adsc.illinois.edu/news/newly-developed-software-can-interpret-emotions-real-time

Paltoglou, G. & Thelwall, M. Seeing Stars of Valence and Arousal in Blog Posts. IEEE Trans. Affect. Comput. 4, 116–123 (2013).

Vastenburg, M., Romero Herrera, N., Van Bel, D. & Desmet, P. PMRI: Development of a Pictorial Mood Reporting Instrument. in CHI ’11 Extended Abstracts on Human Factors in Computing Systems 2155–2160 (ACM, 2011). doi:10.1145/1979742.1979933

Yosinski, J., Clune, J., Bengio, Y. & Lipson, H. How transferable are features in deep neural networks? in Advances in Neural Information Processing Systems 27 (eds. Ghahramani, Z., Welling, M., Cortes, C., Lawrence, N. D. & Weinberger, K. Q.) 3320–3328 (Curran Associates, Inc., 2014).

Ng, H.-W., Nguyen, V. D., Vonikakis, V. & Winkler, S. Deep Learning for Emotion Recognition on Small Datasets Using Transfer Learning. in Proceedings of the 2015 ACM on International Conference on Multimodal Interaction 443–449 (ACM, 2015). doi:10.1145/2818346.2830593

Pan, S. J. & Yang, Q. A Survey on Transfer Learning. IEEE Transactions on Knowledge and Data Engineering 22, 1345–1359 (2010).

Analyst: Yuka Liu | Localized by Synced Global Team: Xiang Chen
